November 2022

Search Observability Data In-Place: Store Where You Want, Query When You Want

When we created Cribl Search, we wanted to give systems administrators the ability to query data without having to spend resources on collection and processing first — but we didn’t stop there. With Search, we’re also making it possible to query all the data you’ve already collected, processed, and kept in places like object stores, file systems, analytics tools, S3 buckets, or other data stores.

Logz.io's New Features: Easier, Faster, and More Cost-Efficient Observability

Our product strategy this year was relatively simple. Many observability practitioners we spoke with complained that observability was oftentimes slow, heavy, complex, and costly – which can be summed up in our CEO’s recent blog on modern observability challenges. While our customers didn’t report similar challenges, we wanted to further distance ourselves from this typical observability experience.

No more "I don't knows."

A Complete Guide to PostgreSQL Performance Tuning: Key Optimization Tips DBAs Should Know

PostgreSQL is an open-source relational database that is highly flexible and reliable and offers a varied set of features. Even though it is a complex database, it provides great integrity and performance. Also, you can deploy it on multiple platforms, including a light version for websites and smartphones. Because you can deploy Postgres in different ways, it comes out of the box with only some basic performance tuning based on the environment you’re deploying on.

Cribl Search: The Most Powerful Tool for Querying Data at Its Source

One of the most useful features of Cribl’s flagship solution Stream is its ability to separate the wheat from the chaff in your data’s journey from source to destination: Stream allows you to control what data goes to what system. Cribl Search takes this to the next level by controlling what data should be collected before it is ever put in motion.

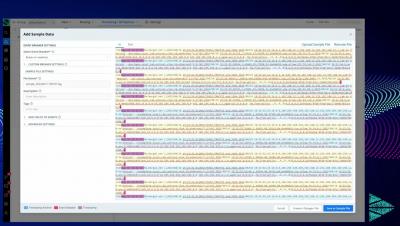

Oh....The Things You Can Test with Built-in Data Generators in Cribl Stream

Picture this! The coffee is hot, the keyboard is ready to rock, the bandwidth is unused, and the software is deployed (or the cloud is waiting patiently)…. but the data is missing! That’s right, most of us have been there. In our industry, it is very common for data to be the lowest common denominator for many projects.

Announcing Logz.io Open 360: One Platform for Open Source Observability

Today, I’m thrilled to announce the introduction of Logz.io Open 360™. This is a major step in our journey. Open 360 is a unified platform for modern engineering teams requiring end-to-end observability across logs, metrics and traces—delivered in an intuitive user interface. Open 360™ is specifically designed to enable engineers to have deep monitoring and insights into distributed systems.

Getting started with unified observability for AWS in less than 10 minutes using terraform

When Stream Meets Lake: Cribl Integrates With New Amazon Security Lake to Help Customers Address Data Interoperability

We’re excited to announce that Cribl integrates with Amazon Security Lake. Amazon Security Lake allows customers to build a security data lake from integrated cloud and on-premises data sources as well as from their private applications using the Open Cybersecurity Schema Framework (OCSF).

New AWS services? No problem! How Sumo Logic is evolving to meet your AWS observability needs

Track and triage errors in your logs with Datadog Error Tracking

Reducing noise in your error logs is critical for quickly identifying bugs in your code and determining which to prioritize for remediation. To help you spot and investigate the issues causing error logs in your environments, we’re pleased to announce that Datadog Error Tracking is now available for Log Management in open beta.

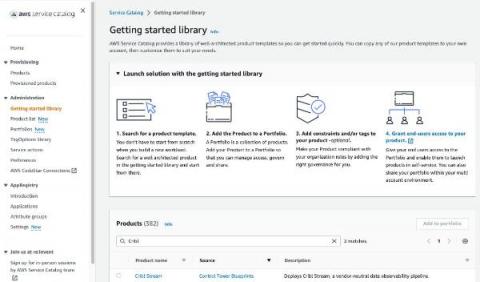

Cribl Supports Multiple AWS Account Monitoring and Analytics with New Account Factory Customization

Keeping with our mission of helping customers gain radical levels of choice and control with their observability data, we’re excited to announce full support for the Amazon Web Services (AWS) Account Factory Customization solution within AWS Control Tower console. Customers can now use AWS Control Tower to define account blueprints that scale their multi-account provisioning in a streamlined manner.

Ubuntu Logs: How to Check and Configure Log Files

Ubuntu provides extensive logging capabilities, so most of the activities happening in the system are tracked via logs. Ubuntu logs are valuable sources of information about the state of your Ubuntu operating system and the applications deployed on it. The majority of the logs are in plain text ASCII format and easily readable. This makes them a great tool to use for troubleshooting and identifying the root causes associated with system failures or application errors.

Making the Most of CloudWatch Log Insights: 7 Best Practices

Amazon CloudWatch provides Log Insights, a feature that helps you search and analyze your log data. It uses a proprietary query language with several basic commands, and provides sample queries for common AWS service log types as well as query auto-completion. Learn more about CloudWatch Log Insights capabilities and how to use them.

Going Beyond Infrastructure Observability: Meta's Approach

What’s the ultimate goal of bringing observability into an organization? Is it just to chase down things when they’re broken and not working? Or can it be used to truly enable developers to innovate faster? That’s a topic I recently discussed with David Ostrovsky, a software engineer at Meta, the parent company of social media networks Facebook and Instagram among others. He was my guest on the most recent episode of the OpenObservability Talks podcast.

The Basics of Using AWS EventBridge for Observability

Why Centralized Log Management? Understanding the Use Cases

Centralized log management provides various benefits across an organization. The fundamentals of log management offer a wide variety of business use cases. Whether you’re managing event log data manually or realizing you need more than an Open Source solution, finding the right internal champions can make your life easier. Understanding the business use cases and strategic impact centralized log management provides can help you gain the internal buy-in you need.

Using the Built-in Data Generators in Cribl Stream

Can Observability Push Gaming Into the Next Sphere?

The gaming industry is an extensive software market segment, reaching over $225 billion US in 2022. This staggering number represents gaming software sales to users with high expectations of game releases. User acquisition takes up a large part of software budgets, with $14.5 billion US spending globally in 2021. User retention is critical to the success of any game, especially where monetization requires driving in-app purchases and ad revenue.

Python Data Analysis for Finance: Analyzing Big Financial Data

Python has staked its claim as the most popular programming language among developers worldwide. Available on Windows, Linux, and Mac, it’s intuitive and easy to read, and its use of maths lends itself perfectly to finance and data analysis.

Wait... Elastic Observability monitors metrics for AWS services in just minutes?

The transition to distributed applications is in full swing, driven mainly by our need to be “always-on” as consumers and fast-paced businesses. That need is driving deployments to have more complex requirements along with the ability to be globally diverse and rapidly innovate.

Cribl Search: An Innovative New Way to Search Observability Data

These days, administrators typically have to deploy multiple tools to search through all of their datasets – then they get to spend the little free time they have left over dreaming of a world where they could search multiple distributed datasets simultaneously, similar to existing web search tools. They might have one tool for Splunk, another for Elastic, and some may even still be using grep or some other cumbersome function to search non-correlated data.

RedHat OpenShift monitoring with Splunk's OpenTelemetry Operator

observIQ awarded Fall 2022 Intellyx Digital Innovator Award

Eating Our Own Goat Food: Using Our Own Products

Here at Cribl, we’re big on GoatFooding. We not only prepare but consume our own products, in our own products. Today we’ll pull back the curtains to shine a light on how we use Cribl products within our Cribl.Cloud service. Cribl is a pioneer in the observability space, so what better way to use our products than by observing Cribl.Cloud?

Prepare your IT systems for Black Friday with best practices and strategies from Ulta Beauty

Searching Observability Data Just Became Point & Shoot

The traditional approach to searching observability data is tried-and-true: once all the search staging is accomplished, we can perform high-speed, high-performance, deep-dive analysis of the data. But is this the best way or even the only way to search all that observability data? The answer to the first question is maybe, as it depends on what you are trying to accomplish. The answer to the second question must be a resounding no.

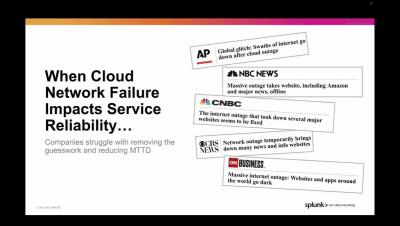

What Is Splunk & What Does It Do? An Introduction To Splunk

Hi! We’re Splunk, and we’re glad you’re visiting us today. Honestly, we hear from people far and wide about “What does Splunk do?”, “Does the name Splunk mean something?” And of course, “How can I learn Splunk?” I wrote this article to help answer all these questions for you and point you towards whatever question you want answered.

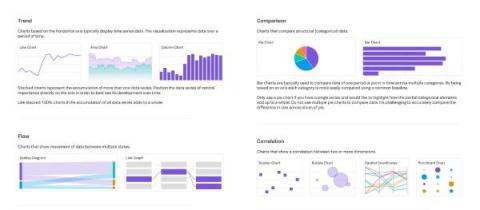

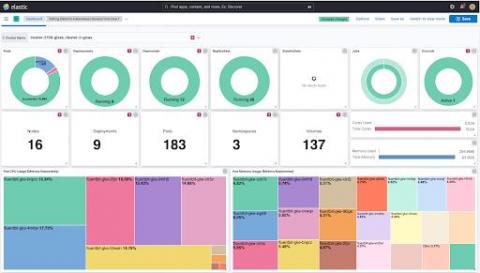

Dashboard Design: Visualization Choices and Configurations

There are many visualization types and configurations available to choose from. In general, keep your visualizations as simple and straightforward as possible to avoid distraction and highlight only the most important information. If there is too much unnecessary information on the page it can be overwhelming and focus can be misdirected to unimportant details.

Elasticsearch Tutorial | Getting Started Guide for Beginners - Sematext

How to design a microservices architecture with Docker containers

Composite availability: calculating the overall availability of cloud infrastructure

Understand how to calculate the composite reliability of your cloud infrastructure to help design Cloud architectures with an optimal SLA.
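
The calculation this teaser refers to can be sketched in a few lines. The following is a minimal illustration, assuming component failures are independent: components in series (all must be up) multiply their availabilities, while redundant components in parallel (at least one must be up) multiply their unavailabilities. The function names and the availability figures are hypothetical and are not taken from the linked article.

```python
from math import prod


def serial_availability(availabilities):
    """Availability of a chain where every component must be up.

    Assumes independent failures: the chain is up only when all
    components are up, so the individual availabilities multiply.
    """
    return prod(availabilities)


def parallel_availability(availabilities):
    """Availability of redundant components where one is enough.

    Assumes independent failures: the group is down only when every
    replica is down, so the individual unavailabilities multiply.
    """
    return 1 - prod(1 - a for a in availabilities)


# Hypothetical SLA figures, chosen for illustration only.
web_tier = 0.999
database = 0.9995

# A request needs both the web tier and the database.
chain = serial_availability([web_tier, database])

# Two redundant web replicas in front of the same database.
redundant_web = parallel_availability([web_tier, web_tier])
chain_with_redundancy = serial_availability([redundant_web, database])

print(f"single web tier + database: {chain:.6f}")
print(f"two web replicas + database: {chain_with_redundancy:.6f}")
```

Under these assumptions a serial chain is always less available than its weakest component, which is why adding redundancy to the least available tier tends to help the composite figure most.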

Independence with OpenTelemetry on Elastic

The drive for faster, more scalable services is on the rise. Our day-to-day lives depend on apps, from a food delivery app to have your favorite meal delivered, to your banking app to manage your accounts, to even apps to schedule doctor’s appointments. These apps need to be able to grow from not only a features standpoint but also in terms of user capacity. The scale and need for global reach drives increasing complexity for these high-demand cloud applications.

Grafana vs. Splunk

Are you trying to choose between Grafana and Splunk, but can't find enough information about their capabilities? In this blog, we highlight the details of why a user should select Grafana OR Splunk as part of their monitoring stack and what are the user benefits of each. Also, you can check out what it's like to make your own Grafana dashboard using our MetricFire free trial. Get onto the product in minutes and see if you prefer Grafana over Splunk.

Cribl.Cloud Is Now On AWS Marketplace!

As of 2022, 49% of enterprise workloads and data are in a public cloud, and that number is expected to increase by 6-7% over the next year. Why? With big cloud moves come big benefits: optimized performance, reduced management overhead, and cost savings on data centers. However, it also comes with the struggle to get a handle over never-ending data growth. Customers are looking to Cribl to help route and process that data at scale and need a seamless way to get started within minutes.

Data Quality Explained: Why Quality Is Critical to Using Your Data

Much like wine (😉), having data doesn’t mean you have quality data. Today it's easier than ever to get data on almost anything. But that doesn’t mean that data is inherently good data, let alone information or knowledge that you can use. In many cases, bad data can be worse than no data, and it can easily lead to false conclusions. So, how do you know that your data is reliable and productive? This is what we call data quality.

Cloud Storage gets better system observability with customizable monitoring dashboards

Customizable in-context dashboards for Google Cloud Storage let you create alerts and view logs for better storage system insights at the project and bucket level.

Reduce Data Costs: Log Sampling with OpenTelemetry and BindPlane OP

5 Reasons Why OpenTelemetry is the Future of Observability

Pipeline Profiling: Or How I Learned to Stop Worrying and Isolate the Problem

It’s that time of year again! If you’re not a procrastinator, you’ve probably already blown out your sprinklers for winter and are looking forward to the snow and holidays ahead. Well done, irrigation purists! I, on the other hand, am an Olympic-level procrastinator and will usually wait until the last moment before NWS forecasts a 10″ snow for the night then frantically search for my air compressor.

The Human Element of Tech Development

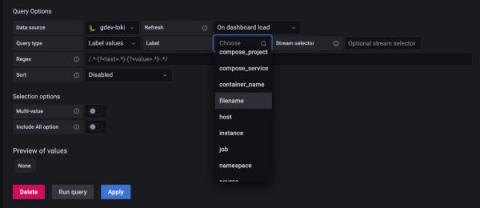

Grafana 9.2: Create, edit queries easier with the new Grafana Loki query variable editor

As part of the Grafana 9.2 release, we’re making it easier to create dynamic and interactive dashboards with a new and improved Grafana Loki query variable editor. Templating is a great option if you don’t want to deal with hard-coding certain elements in your queries, like the names of specific servers or applications. Previously, you had to remember and enter specific syntax in order to run queries on label names or values.

Edge + AppScope: Unlocking New Insights You Didn't Know Existed Was Never This Easy!

The moment has finally arrived! “Yes! I do” “Yes! I do” With great joy, I now introduce to you the newly married Edge and AppScope! Beginning the journey of a lifetime, let’s give it up for this power couple! Together they offer auto-discovery, central management, high scalability, high-fidelity data collection, and rich observability.

How Observability Pipelines Save Your Budget

Our recent blog post about observability pipelines highlighted how they centralize and enable data actionability. A key benefit of observability pipelines is users don't have to compare data sets manually or rely on batch processing to derive insights, which can be done directly while the data is in motion. As a result, teams get access to the data they need to make decisions faster.

ELK Review: ELK vs. MetricFire

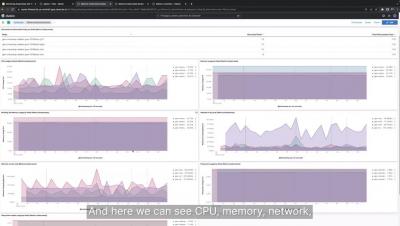

CPU, memory use, latency, network bandwidth. These are just some of the monitoring metrics businesses analyze for security and performance. But successful data-driven organizations delve deeper than this. These companies probe millions of real-time metrics for unexpected insights and predict outcomes weeks, months, and years into the future. ELK helps them do this. It's a data analytics platform from open-source developer Elastic.

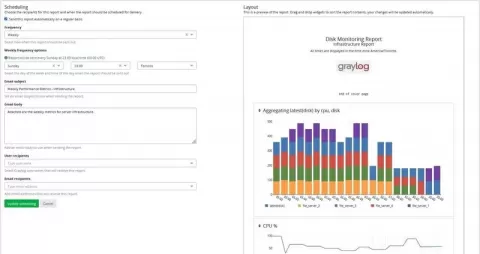

How To Install Graylog On Ubuntu

Choosing an Observability Pipeline

An observability pipeline is a tool or process that centralizes data ingestion, transformation, correlation, and routing across a business. Production engineers across ITOps, Development, and Security teams use them to more efficiently and cost-effectively transform their telemetry data to drive critical decisions. Businesses of all sizes can enjoy several benefits and gain a significant competitive advantage by implementing an observability pipeline.

Ensure your Kubernetes workloads are achieving their full potential with Splunk Observability

What is Logging as a Service (LaaS)?

OpenSearchCon: Together after 18 Months

OpenSearch was created by the community for the community to continue to keep an open-source alternative to Elasticsearch and Kibana. The project has been hard at work for the last 1.5 years building, launching and iterating on this important initiative. Some remarkable milestones have been achieved, including over 5,800 stars on GitHub with 19 different community-led projects.

One Click Visibility: Coralogix expands APM Capabilities to Kubernetes

There is a common painful workflow with many observability solutions. Each data type is separated into its own user interface, creating a disjointed workflow that increases cognitive load and slows down Mean Time to Diagnose (MTTD). At Coralogix, we aim to give our customers the maximum possible insights for the minimum possible effort. We’ve expanded our APM features (see documentation) to provide deep, contextual insights into applications – but we’ve done something different.

A look under the hood at eBPF: A new way to monitor and secure your platforms

In this post, I want to scratch at the surface of a very interesting technology that Elastic’s Universal Profiler and Security solution both use called eBPF and explain why it is a critically important technology for modern observability. I’ll talk a little bit about how it works and how it can be used to create powerful monitoring solutions — and dream up ways eBPF could be used in the future for observability use cases.

Mezmo: It's Time to Set Data Free

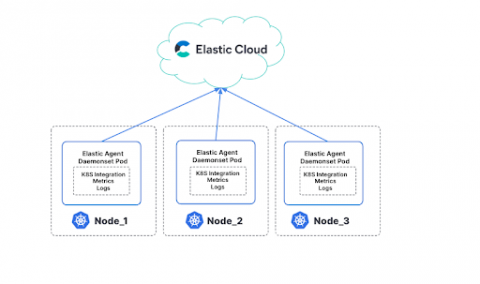

How to Monitor Kubernetes Clusters with Elastic

The Leading Sumo Logic Alternatives

Using Sumo Logic, you can analyze both metrics and logs simultaneously. Developed in 2010, this solution provides a powerful query language and scheduling support. Sumo Logic's production monitoring features provide visibility into production issues. Instead of manually writing alerts, the platform offers pre-configured alert templates (which Logit.io also offers), which makes setting up alerts easier and faster.

How to use Cribl Stream and ChaosSearch for Next-Gen Observability

How to Monitor SNMP with OpenTelemetry

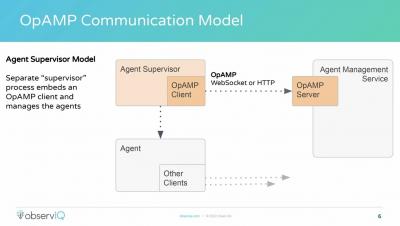

"Managing OpenTelemetry Through the OpAMP Protocol" by Mike Kelly, observIQ

The basics of observing Kubernetes: A bird-watcher's perspective

An avid bird-watcher once told me that for bird-watching beginners, it’s more important to focus on learning about the birds and identifying their unique songs rather than trying to find the perfect pair of binoculars.

How retailers are uncovering insights and driving more conversions this holiday season

This year, global ecommerce transactions are expected to grow by over 12% during the 2022 holiday season. As the industry continues to rely more on ecommerce, retailers are looking at new ways to improve customer experience and provide a safe and secure shopping journey. In an increasingly competitive space, many retailers are leveraging their data assets to accomplish more, spark innovation, create more personalized experiences, and drive higher conversion rates.

Graylog Webhook Notification to Home Assistant

geeks+gurus: How Ulta Beauty digital services shine for the holidays

AWS Lambda Telemetry API: Enhanced Observability with Coralogix AWS Lambda Telemetry Exporter

AWS recently introduced a new Lambda Telemetry API giving users the ability to collect logs, metrics, and traces for analysis in AWS services like CloudWatch or a third-party observability platform like Coralogix. It allows for a simplified and holistic collection of observability data by providing Lambda extensions access to additional events and information related to the Lambda platform.

Sumo Logic's investment in OTel

How to Monitor MongoDB: Key Metrics to Measure for High Performance

Monitoring distributed systems like MongoDB is very important to ensure optimal performance and constant health. But even the best monitoring tool will not be efficient without fully understanding the metrics it gathers and presents, what they represent, how to interpret them, and what they affect. That’s why it is crucial not only to collect the metrics but also to understand them.

Observability Pipelines Have Never Been This Easy to Set Up, Manage, and Troubleshoot

Did you know? Baby goats stand and take their first steps within minutes of being born. There is no stopping the goats! Likewise, there’s no stopping the goats at Cribl from innovating! Our product herd is growing rapidly, and it’s time for us to share the latest and greatest about our head of the product herd – Cribl Stream. (If you haven’t figured it out yet, we love goats here at Cribl. We even have a goat mascot; meet Ian.)

What's New With Cribl.Cloud: Search, BYO IdP, AWS Marketplace, and More

If you have an Amazon Prime membership, you probably got it because of fast and free shipping. And then you discovered your membership also lets you watch TV, movies, and even live sports through Prime Video. When Amazon acquired Whole Foods, your membership included grocery delivery in under 2 hours! The more I explore my Prime membership, the more I find new and exciting access to services I don’t have to sign up for.

Cribl is Redefining Search for Your Observability & Security Data

Cribl, a leader in open observability, today released Cribl Search, the first federated query engine focused on observability and security data. Search flips the observability market on its head, dispatching queries to where the data is already at rest. Cribl Search was engineered to let you search data-in-place, whether the data remains at the edge, in the stream, in the observability lake, at the endpoint, or even in existing search tools.

Livin' on the Edge With Cribl Edge 4.0: Featuring Improved Scalability, Enhanced Fleet Management, and AppScope Integration

Cribl CEO Clint Sharp first announced Cribl Edge in March of 2022. Our SVP of Marketing, Abby Strong, complemented the announcement with a well-rounded blog post discussing why Cribl Edge is the first fully manageable and auto-configurable agent designed to collect telemetry data at scale. Even Aerosmith gave the product a shoutout! Well, not really, but wouldn’t it be fun if it was true? 🙂 We’re thrilled to be back with exciting news about the latest release of Cribl Edge (4.0).

Cribl's New User Interface: Simple, Accessible, and User-Friendly

The last year has been HUGE for us here at Cribl; we’ve seen explosive growth across our business. For those of us in the Product teams, one of the most exciting areas of growth has been the launch of two new ground-breaking products. First, we launched the first fully manageable and auto-configurable agent designed to collect telemetry data at scale – Cribl Edge, which enables customers to move data collection, processing, and routing out into the data source itself.

Advancing Observability: Cribl Search and New Product Enhancements Available Today

Product launch day is our favorite here at Cribl. It’s the culmination of hard work from our entire team and, better yet, the first time our customers get their hands on our latest innovations. And today is a big one. Our newest product, Cribl Search, is now generally available on Cribl.Cloud.

Upgrade Graylog 4.3 With Elasticsearch to OpenSearch

Cribl's Fall Launch: Beyond the Pipeline

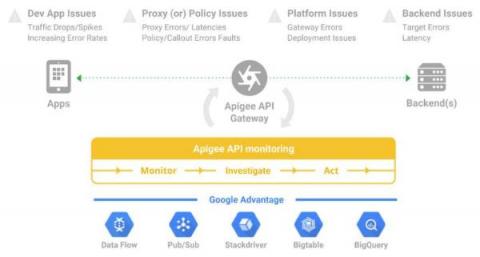

3 best practices to reduce application downtime with Google Cloud's API monitoring tools

Maintain high uptime and performance for your APIs without any overheads using Google Cloud’s API monitoring tools.

Observability is Still Broken. Here are 6 Reasons Why.

In an era where there’s no shortage of established best practices and tools, engineering teams are consistently finding their ability to prevent, detect and resolve production issues is only getting harder. Why is this the case? Our most recent DevOps Pulse Survey highlighted alarming trends to this end.

Splunk Platform Demo

The Hidden Cost of Overlogging

Observability Data Documentation Best Practices

A few weeks back, I got the chance to sit down with our very own Jordan Perks from the Cribl Customer Success Team. Jordan is an Observability subject matter expert AND knows a thing or two about Cribl Products! After geeking out a bit about data best practices, we started chatting about enabling our customer champions to have different conversations with stakeholders across their organizations. When someone becomes an observability engineer, they step into a much different role.

Introducing a more complete logs forwarding experience

One of the key attributes of DevOps and SRE engineers is their ability to meticulously observe and monitor all of their applications, a task that can be achieved more efficiently by centralizing all generated logs at a central endpoint. By centralizing logging, engineers can, at any time, have an accurate overview of all events which take place across their applications, from just one place. Storing logs in an external system also allows companies to ensure compliance with many certifications.

10 things you should know about using AWS S3

How to decide on self-hosted vs managed Apache Airflow

What Is Observability?

In today's complex, multi-cloud environments, IT and engineering teams are under increasing pressure to respond to errors affecting their entire system. Therefore, IT operations, DevOps, and SRE teams are all striving to gain complete observability across these increasingly complex and diverse computing environments. But what exactly does observability mean?

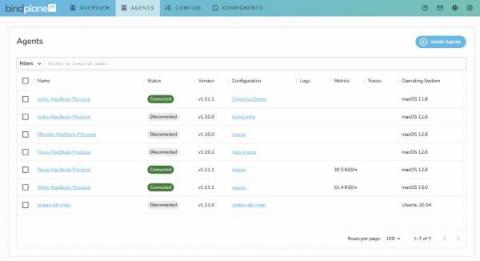

observIQ Announces Enterprise Edition of Open Source Observability Pipeline BindPlane OP

Modern Canadian MSSP drives next-gen MDR with Logz.io and Tines

Today’s Managed Security Service Providers (MSSPs) are trying to grow their business quickly, improving margins and onboarding customers with high-quality tool sets that scale with the business. This means reducing cost, improving onboarding time and building the next generation of Managed Detection and Response (MDR) to deal with threats that are increasing in volume and sophistication.

BindPlane OP Enterprise Reaches GA

How to Index and Process JSON Data for Hassle-free Business Insights

Sematext Updates Review Ep. 1 | Features and Product Updates Overview

OpenTelemetry, Auto-Instrumentation and Splunk Observability Cloud: A Jump Start

Have you been meaning to learn about OpenTelemetry and the integration of all available application and service telemetry? If you like to learn things by doing, get ready to dive in and have some fun with OpenTelemetry and Splunk Observability Cloud. Quickly learn more about OpenTelemetry auto-instrumentation and collectors at your own pace with these walkthroughs and guides.

Platform Engineering: DevOps Evolution or a Fancy Re-name?

Everyone’s talking about Platform Engineering these days. Even Gartner recently featured it in its Hype Cycle for Software Engineering 2022. But what is Platform Engineering really about? Is it the next stage in the evolution of DevOps? Is it just a fancy rebrand for DevOps or SRE? As a veteran of the PaaS (Platform as a Service) discipline about a decade ago, and a DevOps enthusiast at present, I decided to delve into this topic, peel off the hype, and see what it’s about in practice.

High Five! Splunk Honored With Five TrustRadius Best Software Awards

Customers have spoken, and we’re feeling the love. Splunk has just been honored with no fewer than five “Best Software” Awards from TrustRadius! Based exclusively on customer reviews, Splunk Enterprise Security (ES) took home the top spot in three categories: Best Software for Enterprise, Best Software for Mid-Sized Businesses, and Best Software for Small Businesses.

What is Jaeger Distributed Tracing?

Distributed tracing is the ability to follow a request through a software system from beginning to end. While that may sound trivial, a single request can easily spawn multiple child requests to different microservices with modern distributed architectures. These, in turn, trigger further sub-requests, resulting in a complex web of transactions to service a single originating request.
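
To make the "web of transactions" concrete, here is a minimal sketch of how one request can fan out into child and grandchild operations that all share a single trace ID. The `Span` class, the `walk` helper, and the service names are hypothetical and written for illustration; this is not Jaeger's API or data model, only the general parent/child idea behind it.

```python
import uuid
from dataclasses import dataclass, field
from typing import Iterator, List, Optional, Tuple


@dataclass
class Span:
    """One unit of work in a trace (hypothetical, simplified model)."""
    name: str
    trace_id: str                      # shared by every span in the request
    parent_id: Optional[str] = None    # None marks the root span
    span_id: str = field(default_factory=lambda: uuid.uuid4().hex[:16])
    children: List["Span"] = field(default_factory=list)

    def child(self, name: str) -> "Span":
        """Start a sub-request that inherits this span's trace ID."""
        span = Span(name=name, trace_id=self.trace_id, parent_id=self.span_id)
        self.children.append(span)
        return span


def walk(span: Span, depth: int = 0) -> Iterator[Tuple[int, Span]]:
    """Yield (depth, span) pairs in depth-first order."""
    yield depth, span
    for c in span.children:
        yield from walk(c, depth + 1)


# A single originating request and the calls it triggers (made-up names).
root = Span(name="GET /checkout", trace_id=uuid.uuid4().hex)
cart = root.child("cart-service")
payment = root.child("payment-service")
fraud = payment.child("fraud-check")

# Print the request tree, indenting each level of sub-request.
for depth, span in walk(root):
    print("  " * depth + span.name)
```

Because every span carries the same trace ID and a pointer to its parent, a tracing backend can reassemble the full request tree even when the spans are reported by different services.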

Managing your Kubernetes cluster with Elastic Observability

As an operations engineer (SRE, IT manager, DevOps), you’re always struggling with how to manage technology and data sprawl. Kubernetes is becoming increasingly pervasive and a majority of these deployments will be in Amazon Elastic Kubernetes Service (EKS), Google Kubernetes Engine (GKE), or Azure Kubernetes Service (AKS). Some of you may be on a single cloud while others will have the added burden of managing clusters on multiple Kubernetes cloud services.