
Building a Custom Grafana Dashboard for Kubernetes Observability

Distributed systems built on a microservices architecture introduce many complexities, and small problems arise in them constantly. Engineering teams must therefore be able to prioritize those problems. Viewing the logs and metrics of such systems shows engineers the shared state of the system components, which informs the decision about which problem needs to be solved first.

4 easy ways to keep your knowledge base relevant and up to date

Knowledge is king in today’s information-driven business world, as most knowledge managers like me know. Having the right information at customers' fingertips can greatly influence their perception of the customer support experience. In today’s self-service world, a well-populated knowledge base with a reliable group of contributors is not enough to create a great experience. The content must be relevant and timely.

New Features and Enhancements in SQL Server 2022

When and Why To Adopt Feature Flags

What if there were a way to deploy a new feature into production and not actually turn it on until you’re ready? There is! These tools are called feature flags (or feature toggles or flippers, depending on whom you ask). Feature flags are a powerful way to fine-tune your control over which features are enabled within a software deployment. Of course, feature flags aren’t the right solution in all cases.
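As a rough illustration of the idea, here is a minimal sketch of a feature flag in Python. The `FLAGS` dictionary, the `new_checkout` flag name, and the `checkout` function are all hypothetical and stand in for whatever flag service and feature a real deployment would use.

```python
# Hypothetical in-memory flag store; real systems usually read flags
# from a configuration service or a dedicated feature-flag product.
FLAGS = {"new_checkout": False}


def is_enabled(flag_name: str) -> bool:
    """Return True only if the flag exists and is switched on."""
    return FLAGS.get(flag_name, False)


def checkout(cart_total: float) -> str:
    # The new code path is deployed, but stays dormant until the flag is flipped.
    if is_enabled("new_checkout"):
        return f"new checkout flow: {cart_total:.2f}"
    return f"legacy checkout flow: {cart_total:.2f}"
```

Flipping `FLAGS["new_checkout"]` to `True` switches callers to the new path without another deployment, which is the core appeal of the technique.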

Top Incident Response Metrics & How to Use Them

Two categories a software organization should always strive to improve in are: Data analysis is one way that your organization can improve the efficiency of incident management and overall application quality. However, two questions remain: which metrics should be collected, and how can analysis of these metrics facilitate these improvements? Read on to learn about five key metrics essential to incident response.

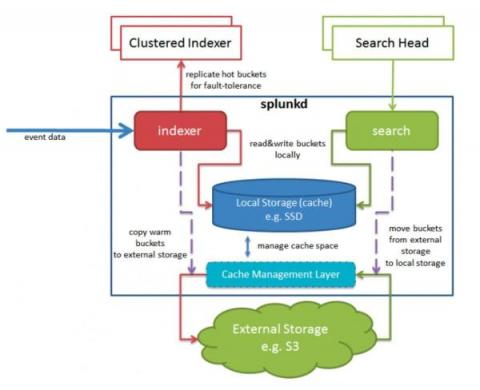

Splunk 9.0 SmartStore with Microsoft Azure Container Storage

With the release of Splunk 9.0 came support for SmartStore in Azure. Previously, achieving this required some form of S3-compliant broker API, but now we can use native Azure APIs. The addition of this capability means that Splunk now offers complete SmartStore support for all three of the big public cloud vendors. This blog will describe a little about how it works and help you set it up yourself.

How Does Observability Help an Organization Move the Needle?

If you’re new to the concept or just trying to keep up with the conversation, Gartner defines Observability as the evolution of monitoring into a process that offers insight into digital business applications, speeds innovation and enhances customer experience. Some folks think that Observability is a new buzzword, but in fact the term was coined in 1960 by Rudolf E. Kalman, a Hungarian-American engineer.

What Are Control Flow Statements?

Control flows are the backbone of automation. Identifying what to do with a set of data – and how – is a key component of high-value automation, but it can also be confusing to wrap your head around at first. What is a conditional? And what does it have to do with a loop? How do you deal with a set of information versus a single data point?
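To make the conditional-versus-loop distinction concrete, here is a small sketch in Python. The `classify_readings` function, its threshold, and the `"alert"`/`"ok"` labels are made up for illustration: the loop walks a set of data, and the conditional decides what to do with each single data point.

```python
def classify_readings(readings: list[float], threshold: float) -> list[str]:
    """Label each reading as "alert" or "ok" relative to a threshold."""
    labels = []
    for value in readings:       # loop: handle a set of data one item at a time
        if value > threshold:    # conditional: decide what to do with one data point
            labels.append("alert")
        else:
            labels.append("ok")
    return labels
```

Calling `classify_readings([1.0, 5.0, 3.0], 3.0)` labels only the middle reading as an alert, since it is the only value strictly above the threshold.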

How to Build Multi-Arch Docker Images

With ARM-based dev machines and servers becoming more common, it has become increasingly important to build Docker images that support multiple architectures. This guide will show you how to build these Docker images on any machine of your choosing.