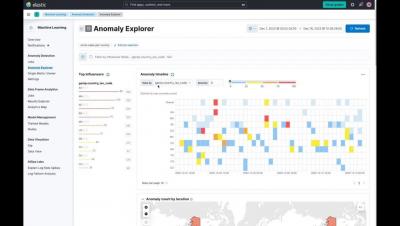

Communicating Context Across Splunk Products With Splunk Observability Events

When an IT or Security issue impacts a development team’s software, how is that team notified? Is your organization still relying on mass emails that lack context and that most engineers have probably already filtered out of their inboxes? Communicating between siloed tools and teams can be difficult. How would you like to put notifications from IT, Security, legacy processes, and the business, each targeted at the relevant development teams, right into one of their most important tools? Now you can!