Setting Up a Data Loop Using Cribl Search and Stream, Part 2: Configuring Cribl Search

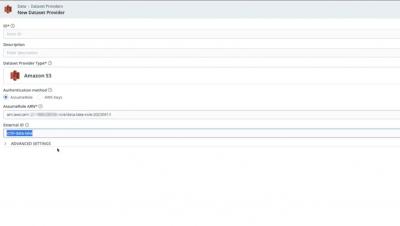

In the second video of our series, we configure Cribl Search to access the data we stored in the S3 bucket. The video walks step by step through setting up a Cribl Search S3 Dataset Provider, using the authentication settings from the Cribl Stream Data Lake destination as a model. Next, we create a Dataset that uses the Provider we just configured. Finally, we show how to search the test data we previously stored in the S3 bucket.