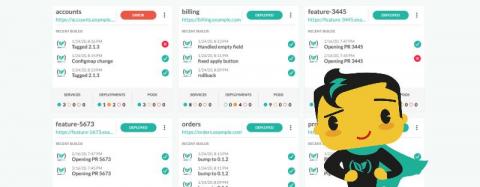

Announcing a new dashboard for Deployment Environments

One of the central ideas behind the Codefresh GUI is to give developers and operators as much information as possible about the status of a deployment. A pipeline that finishes successfully does not guarantee that the environment it deployed to is healthy.