
Getting started with OpenTelemetry on Kubernetes

OpenTelemetry is an instrumentation standard for application monitoring, covering both metrics and distributed tracing. In this blog, we take you through a hands-on guide to running it on Kubernetes.

Using Observability as a Proxy for Customer Happiness

Today, users and customers are driven by response rates to their online requests. It’s no longer good enough for a request to simply run to completion; it also has to fit within the perceived limits of “fast enough”. Yet, as we continue to build cloud-native applications with microservice architectures, driven by container orchestration like Kubernetes in public clouds, we need to understand the behavior of our system across all aspects, not just one.

OpenTelemetry, Open Collaboration

OpenTelemetry, the merger of OpenCensus and OpenTracing, appeared in May of 2019, led by companies like Omnition (now a part of Splunk), Google, Microsoft, and others who are pushing the curve on observability. OpenTelemetry is a project within the Cloud Native Computing Foundation (CNCF) that has gathered contributors and supporters far and wide, becoming one of the most active projects found in open source today. It currently ranks second in activity, behind only Kubernetes!

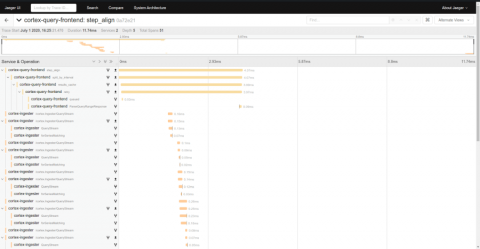

Where did all my spans go? A guide to diagnosing dropped spans in Jaeger distributed tracing

Nothing is more frustrating than feeling like you’ve finally found the perfect trace only to see that you’re missing critical spans. In fact, a common question for new users and operators of Jaeger, the popular distributed tracing system, is: “Where did all my spans go?” In this post we’ll discuss how to diagnose and correct lost spans in each element of the Jaeger span ingestion pipeline.

Distributed Tracing & Logging - Better Together

Distributed Tracing Tools and New Industry Standards

Metrics and logs have been around for a long time, yet we haven’t adopted common standards for them. Sure, there have been attempts on the metric side with OpenMetrics. Similarly, tracing only got a standardization effort with OpenTracing just a few years ago. No unified effort to standardize all observability signals existed until OpenTelemetry began a little less than two years ago. And there has been a need.

Elastic introduces OpenTelemetry integration

We are pleased to announce the availability of the Elastic OpenTelemetry integration, available on Elastic Cloud or when you download Elastic APM. This integration continues our commitment to openness by embracing open standards and supporting the evolving OpenTelemetry standard for observability.

Dogfooding Chronicles: Thinking Backward, Moving Forward

Zac Propersi, Engineering Manager at Sentry, can tell when a page is not loading as fast as it should — just by looking at it. While working on our new Metric Alerts feature, Zac noticed that the alerts pages were rendering slowly. Being the super Sentry user that he is, he wrote a custom query in Discover to see just how slow the transactions were.

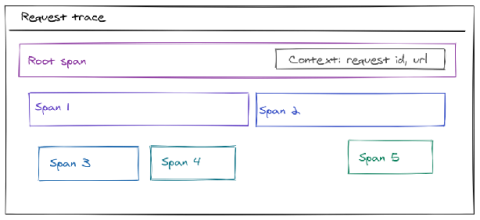

OpenTracing for Go projects

Large-scale cloud applications are usually built using interconnected services that can be rather hard to troubleshoot. When a service is scaled, simple logging doesn’t cut it anymore and a more in-depth view into the system’s flow is required. That’s where distributed tracing comes into play; it allows developers and SREs to get a detailed view of a request as it travels through the system of services.