Time-based scaling of Enterprise Search on Elastic Cloud

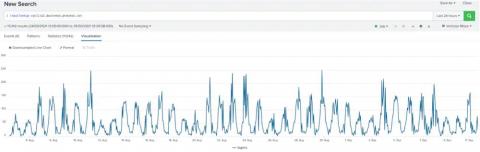

Does your Elastic Enterprise Search Cloud deployment follow a predictable usage pattern? If so, you can automatically scale your deployment up and down on a schedule to keep performance high during busy periods and reduce operating costs during quiet ones. In this article, we show you how to use the Elastic Cloud API to change how many Enterprise Search nodes you’re running. We call this API from a cron job to achieve hands-free, time-triggered scaling.