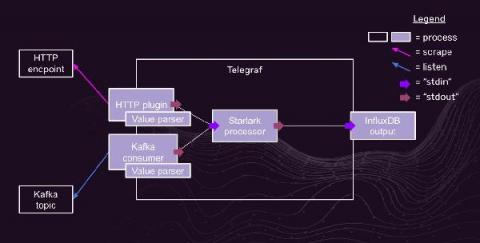

How to manage Elasticsearch data across multiple indices with Filebeat, ILM, and data streams

Indices are an important part of Elasticsearch. Each index keeps your data sets separated and organized, giving you the flexibility to treat each set differently and making it simple to manage data through its lifecycle. Elastic makes it easy to take full advantage of indices by offering ingest methods and management tools that simplify the process.