
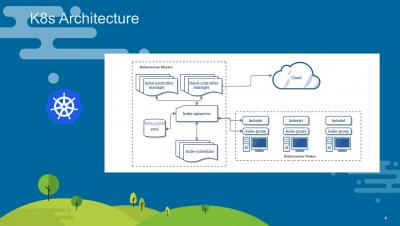

Kubernetes Rolling Update Configuration

Deployment controllers are a type of Pod controller in Kubernetes. They provide fine-grained control over how their Pods are configured, how updates are performed, how many Pods should run, and when Pods should be terminated. There are many resources on configuring basic Deployments, but it can be difficult to understand how each option affects the way rolling updates are performed.
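Two of the options that most directly shape a rolling update are `strategy.rollingUpdate.maxSurge` and `maxUnavailable`. The sketch below is a Python approximation, not the controller's actual code, of how they turn into pod-count limits. It assumes the documented behavior that percentages are rounded up for `maxSurge` and down for `maxUnavailable`, with both defaulting to 25%. The fallback to one unavailable Pod when both resolve to zero reflects our reading of the Deployment controller and should be treated as an assumption. The function names are hypothetical.

```python
def resolve(value, replicas, round_up):
    """Turn an int or a percentage string such as '25%' into an absolute Pod count."""
    if isinstance(value, str) and value.endswith("%"):
        numerator = int(value[:-1]) * replicas  # integer math avoids float rounding surprises
        return -(-numerator // 100) if round_up else numerator // 100
    return int(value)


def rolling_update_bounds(replicas, max_surge="25%", max_unavailable="25%"):
    """Return (max Pods running at once, min Pods that must stay available).

    Hypothetical helper: maxSurge rounds up, maxUnavailable rounds down.
    """
    surge = resolve(max_surge, replicas, round_up=True)
    unavailable = resolve(max_unavailable, replicas, round_up=False)
    if surge == 0 and unavailable == 0:
        # Assumed controller fallback: allow one Pod to go unavailable so the
        # rollout can still make progress.
        unavailable = 1
    return replicas + surge, replicas - unavailable


if __name__ == "__main__":
    # 10 replicas with the defaults: 25% of 10 = 2.5 -> surge 3, unavailable 2
    print(rolling_update_bounds(10))
    # Never exceed the replica count; take down one Pod at a time
    print(rolling_update_bounds(3, max_surge=0, max_unavailable=1))
```

With the defaults and 10 replicas, the rollout may run up to 13 Pods while keeping at least 8 available. Setting `maxSurge` to 0 trades rollout speed for never exceeding the requested capacity.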

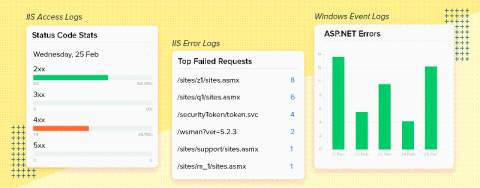

Three ways to debug IIS web server failures using logs

Unresponsive and slow pages are bad for any website. Even with the best user interface (UI), they hurt the customer experience and the brand's reputation. Research from the Nielsen Norman Group has determined that the average user will leave a site after about 10 seconds of waiting for a page to load. If your pages take longer than a few seconds to load, it's time to check your IIS server logs.
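As a starting point for that check, the sketch below scans IIS logs written in the W3C extended format, where a `#Fields:` directive names the space-separated columns and the `time-taken` column records request duration in milliseconds. It assumes `time-taken` is enabled in your logging configuration. The 3-second threshold and the `slow_requests` helper are illustrative choices, not part of IIS.

```python
def slow_requests(log_lines, threshold_ms=3000):
    """Return log entries whose time-taken is at least threshold_ms, slowest first.

    Hypothetical helper for IIS W3C extended logs: column names come from the
    most recent '#Fields:' directive, and values are separated by spaces.
    """
    fields = []
    slow = []
    for raw in log_lines:
        line = raw.strip()
        if not line:
            continue
        if line.startswith("#Fields:"):
            fields = line[len("#Fields:"):].split()
            continue
        if line.startswith("#"):
            continue  # other directives such as #Software or #Date
        values = line.split()
        if len(values) != len(fields):
            continue  # malformed line, or data seen before any #Fields directive
        entry = dict(zip(fields, values))
        taken = entry.get("time-taken", "")
        if taken.isdigit() and int(taken) >= threshold_ms:
            slow.append(entry)
    slow.sort(key=lambda e: int(e["time-taken"]), reverse=True)
    return slow


if __name__ == "__main__":
    sample = [
        "#Software: Microsoft Internet Information Services 10.0",
        "#Fields: date time cs-method cs-uri-stem sc-status time-taken",
        "2020-02-01 10:00:00 GET /index.html 200 120",
        "2020-02-01 10:00:05 GET /search 200 4500",
        "2020-02-01 10:00:09 POST /checkout 500 12000",
    ]
    for entry in slow_requests(sample):
        print(entry["cs-uri-stem"], entry["sc-status"], entry["time-taken"], "ms")
```

In practice you would pass an open log file instead of the sample list. Sorting slowest-first makes it easy to see whether delays cluster on particular URLs or status codes.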


UI/UX Updates: Faster and Smoother Sample Navigation in AppSignal

Today, we’re releasing an update to the performance/exceptions sample page. It includes a number of improvements that help you navigate and filter the available samples faster and more smoothly. These changes iterate on the sample navigation improvements we launched a while ago, after our users gave us valuable feedback: the overlay made navigation choppy instead of fluid.

How to configure Grafana as code

Grafana dashboards can do a lot, but do you know how much more you can get out of them by configuring them as code? That was the topic of a recent FOSDEM 2020 talk by Grafana software developer Malcolm Holmes and Julien Pivotto, an open source consultant at Inuits. In their presentation, the pair discussed Grafonnet (a Jsonnet library to generate Grafana dashboards), provided tips and tricks about how to use it efficiently, and explained how to fully manage your Grafana instances from code.
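The talk centers on Grafonnet and Jsonnet. To show the underlying idea in a language-neutral way, the sketch below takes a different route and generates Grafana dashboard JSON from plain Python. It is a substitute technique, not Grafonnet. Panel positions use Grafana's 24-column `gridPos` grid. The `timeseries` panel type, the Prometheus-style `expr` targets, and the metric names are assumptions that depend on your Grafana version and data source. The helper functions are hypothetical.

```python
import json


def timeseries_panel(panel_id, title, expr, x, y, w=12, h=8):
    """Build one panel dict; 'expr' assumes a Prometheus data source."""
    return {
        "id": panel_id,
        "type": "timeseries",  # assumed panel type; older Grafana versions used "graph"
        "title": title,
        "gridPos": {"x": x, "y": y, "w": w, "h": h},
        "targets": [{"expr": expr, "refId": "A"}],
    }


def build_dashboard(title, queries):
    """Lay out (title, query) pairs two per row on Grafana's 24-column grid."""
    panels = []
    for i, (panel_title, expr) in enumerate(queries):
        x = (i % 2) * 12  # left or right half of the row
        y = (i // 2) * 8  # each row is one panel height tall
        panels.append(timeseries_panel(i + 1, panel_title, expr, x, y))
    return {
        "title": title,
        "time": {"from": "now-6h", "to": "now"},
        "panels": panels,
    }


if __name__ == "__main__":
    dashboard = build_dashboard(
        "Service overview",
        [
            # Hypothetical metric names, for illustration only
            ("Request rate", "rate(http_requests_total[5m])"),
            ("Error rate", 'rate(http_requests_total{status="500"}[5m])'),
            ("Instances up", "up"),
        ],
    )
    print(json.dumps(dashboard, indent=2))
```

Because the dashboard is ordinary code, repeated panels come from a loop rather than copy-and-paste, and the generated JSON can be version-controlled and provisioned like any other build artifact. That is the same benefit Grafonnet provides in Jsonnet.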

The Best Resources for Learning Kubernetes

Kubernetes is the world’s leading container orchestration platform. Because it is cloud-agnostic, it lets you manage your workloads with ease, whether they run in the cloud or on-premises. It has reduced the risk of being locked into a single cloud provider's services, as well as the need for an entire operations team to manage large on-premises workloads on virtualization platforms.