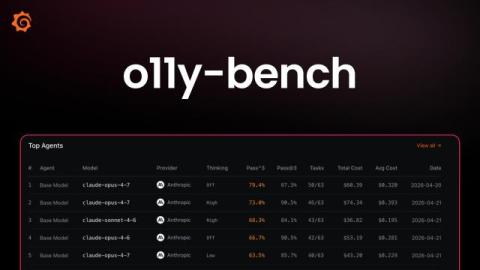

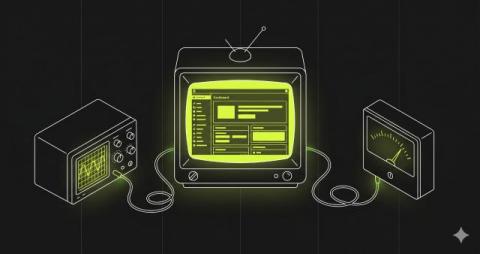

Introducing o11y-bench: an open benchmark for AI agents running observability workflows

Evaluating agents is hard. Verifying observability tasks is harder. Yes, AI agents have gotten dramatically and measurably better at coding and tool use, but observability presents a different kind of challenge. In a real incident, the hard part is rarely just writing a query. It's deciding which signal matters, figuring out whether a spike is noise or a symptom, correlating metrics with logs and traces, and sometimes making a change in Grafana without breaking a dashboard that another engineer depends on.