Understanding Distributed Tracing with a Message Bus

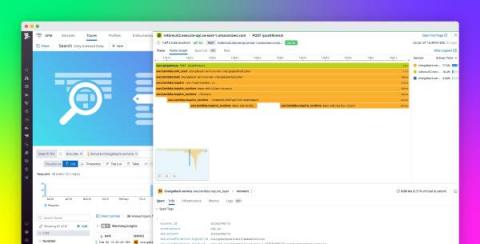

So you're used to debugging systems with distributed traces, but your system is about to introduce a message queue. That will work the same… right? Unfortunately, in many implementations it doesn't. In this post, we'll cover trace propagation (both manual and with OpenTelemetry), the W3C Trace Context standard, and where a trace might start and finish.
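To make "trace propagation" concrete, here is a minimal, stdlib-only sketch of *manual* propagation across a message bus. It assumes the W3C Trace Context `traceparent` header format (`version-traceid-parentid-flags`) and uses an in-memory `queue.Queue` as a stand-in for a real broker. The names `SpanContext`, `Message`, `publish`, and `consume` are hypothetical illustrations, not OpenTelemetry's API. OpenTelemetry's propagators perform the same inject/extract step for you. The key idea is that the producer writes its span context into the message headers, and the consumer reads it back so its span continues the same trace instead of starting a new one.

```python
from __future__ import annotations

import re
import secrets
from dataclasses import dataclass, field
from queue import Queue

# W3C traceparent, version 00 only (hypothetical minimal parser):
# "00-<32 hex trace-id>-<16 hex parent-id>-<2 hex flags>"
TRACEPARENT_RE = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


@dataclass
class SpanContext:
    trace_id: str                   # shared by every span in one trace
    span_id: str                    # unique per span
    flags: str = "01"               # "01" = sampled
    parent_id: str | None = None    # span id of the parent, if any


def new_trace() -> SpanContext:
    # Root span: the trace starts here.
    return SpanContext(secrets.token_hex(16), secrets.token_hex(8))


def child_of(parent: SpanContext) -> SpanContext:
    # Same trace id, fresh span id, parent recorded.
    return SpanContext(parent.trace_id, secrets.token_hex(8), parent.flags, parent.span_id)


def to_traceparent(ctx: SpanContext) -> str:
    return f"00-{ctx.trace_id}-{ctx.span_id}-{ctx.flags}"


def from_traceparent(header: str) -> SpanContext | None:
    m = TRACEPARENT_RE.match(header.strip())
    if not m:
        return None
    trace_id, span_id, flags = m.groups()
    # All-zero ids are invalid, so the header is ignored.
    if trace_id == "0" * 32 or span_id == "0" * 16:
        return None
    return SpanContext(trace_id, span_id, flags)


@dataclass
class Message:
    body: str
    headers: dict[str, str] = field(default_factory=dict)


def publish(bus: Queue, body: str, current: SpanContext) -> SpanContext:
    # Producer: open a "send" span and inject its context into the headers.
    send_span = child_of(current)
    bus.put(Message(body, {"traceparent": to_traceparent(send_span)}))
    return send_span


def consume(bus: Queue) -> tuple[Message, SpanContext]:
    # Consumer: extract the context; continue the trace if it is present,
    # otherwise a brand-new trace starts at the consumer.
    msg = bus.get()
    remote = from_traceparent(msg.headers.get("traceparent", ""))
    process_span = child_of(remote) if remote else new_trace()
    return msg, process_span
```

Usage: `sent = publish(bus, "order-created", new_trace())` followed by `msg, proc = consume(bus)` yields a consumer span whose `trace_id` matches the producer's and whose `parent_id` is the send span's id. If a producer forgets to inject the header, the consumer silently starts a new trace, which is the "broken trace" symptom this post is about.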

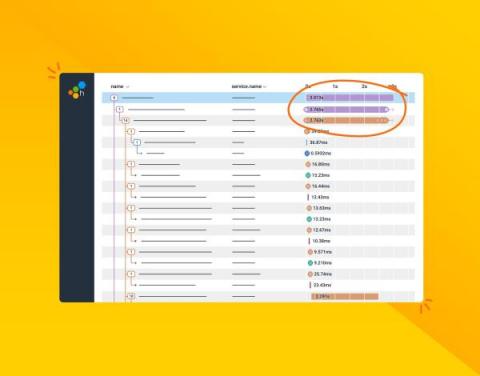
