Troubleshooting Common Kafka Conundrums

This is the third blog in our series on Kafka, where we continue to explore the nuances of deploying Kafka at scale. In our previous blogs, Essential Metrics for Kafka Performance Monitoring and Auto-Instrumenting OpenTelemetry for Kafka, we laid the foundation for understanding Kafka’s performance and monitoring aspects. Now, as we move deeper into the Kafka ecosystem, we tackle the common challenges that can arise during deployment and scaling.

Essential Metrics for Kafka Performance Monitoring

Apache Kafka is an open-source distributed streaming system that has grown in popularity and usage across the technology industry. Originating from LinkedIn and now part of the Apache Software Foundation, Kafka provides a robust and scalable platform. It’s uniquely designed with an architecture that includes both a storage layer and a compute layer.

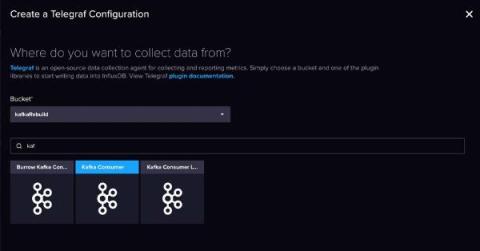

Build a Data Streaming Pipeline with Kafka and InfluxDB

InfluxDB and Kafka aren’t competitors – they’re complementary. Streaming data, and more specifically time series data, travels in high volumes and at high velocities. Adding InfluxDB to your Kafka cluster provides specialized handling for your time series data. This specialized handling includes real-time queries and analytics, and integration with cutting-edge machine learning and artificial intelligence technologies. Companies such as Hulu have paired their InfluxDB instances with Kafka.

Auto-Instrumenting OpenTelemetry for Kafka

Apache Kafka, born at LinkedIn in 2010, has revolutionized real-time data streaming and has become a staple in many enterprise architectures. As it facilitates seamless processing of vast data volumes in distributed ecosystems, the importance of visibility into its operations has risen substantially. In this blog, we’re setting our sights on the step-by-step deployment of a containerized Kafka cluster, accompanied by a Python application to validate its functionality. The cherry on top?