How AI Turns Monitoring From "What Now?" Into "What's Next?"

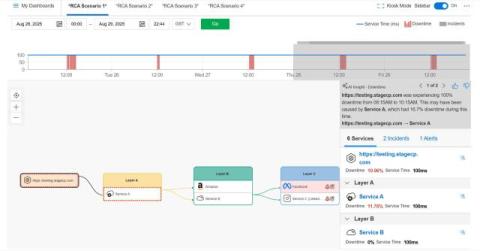

It's 3 AM. Your phone starts buzzing with alerts, and you stumble to your laptop only to be greeted by a dashboard that looks like the control panel of a nuclear reactor in meltdown: red lights everywhere. Numbers that should be green are decidedly not green. And your brain, still foggy from sleep, is asking the most fundamental question in all of IT operations: "Okay, yes, there's clearly a problem... but now what?"