What your product data is actually saying

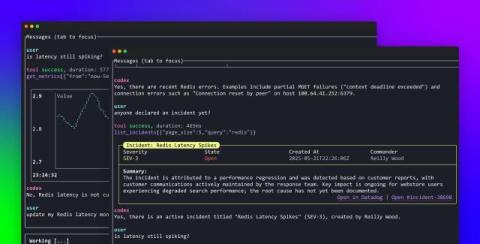

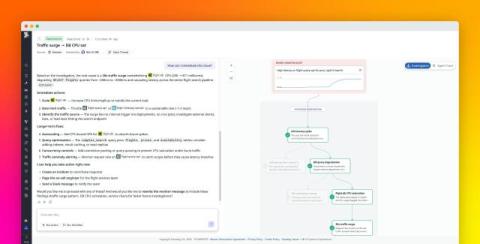

As AI agents and similar tools take on more of the work of instrumenting, governing, and centralizing product analytics data, product managers (PMs) still own what the underlying events mean and which outcomes they connect to. Knowing when to trust the data, forming strong hypotheses, and acting on the resulting insights all require an expert in the loop.