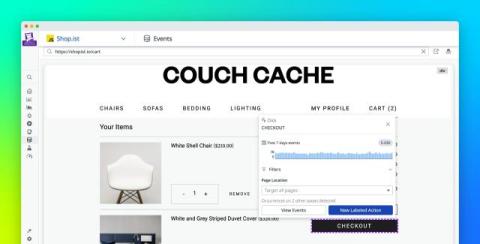

Make faster, better product decisions with Datadog Product Analytics

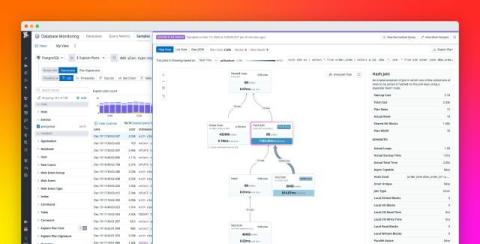

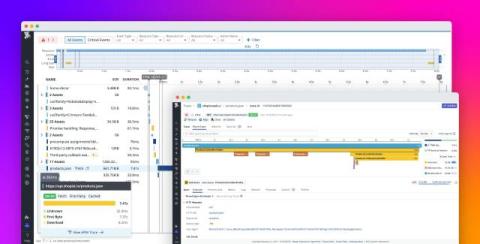

Product managers (PMs) need to make fast, confident decisions about what to build, fix, and improve based on how users behave in their application. In practice, though, collecting the user insights they need is rarely straightforward. Recent updates to Datadog Product Analytics address this challenge by adding structure to autocaptured data and making analysis easier to interpret, reuse, and share, helping PMs move from questions to answers without relying on SQL or engineering.