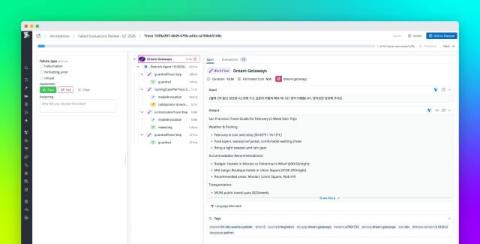

Annotate traces to improve LLM quality with Datadog LLM Observability

LLM applications rarely crash. They degrade quietly. Once these applications ship to production, subtle quality failures are hard to catch with traditional monitoring signals. Tone shifts, hallucinated details, off-topic responses, and incomplete reasoning can emerge while latency and token usage look stable.