The latest news and information on containers, Kubernetes, Docker, and related technologies.

Why Heroku and AWS have failed to serve modern developers?

Introducing Cycle's Support of Google Cloud Platform

Our goal has always been to automate and standardize infrastructure management and application deployment across user infrastructure. Today, we are excited to announce that we've launched support for Google Cloud (GCP), growing our list of natively supported providers and extending the choice, flexibility, and reach the platform offers to current and new users. With this new integration, users can deploy, manage, and scale their containerized workloads on GCP compute infrastructure.

How to Monitor Calico's eBPF Data Plane for Proactive Cluster Management

Monitoring is a critical part of any computer system that has been brought into a production-ready state. No IT system exists in true isolation, and even the simplest systems interact in interesting ways with the systems “surrounding” them. Since compute time, memory, and long-term storage are all finite, it’s necessary at the very least to understand how these resources are being allocated.

Managing Machine Learning Workloads Using Kubeflow on AWS with D2iQ Kaptain

While the global spend on artificial intelligence (AI) and machine learning (ML) was $50 billion in 2020 and is expected to increase to $110 billion by 2024 per an IDC report, AI/ML success has been hard to come by—and often slow to arrive when it does. There are four main impediments to successful adoption of AI/ML in the cloud-native enterprise.

Get a Great Developer Experience with GitHub Actions and Shipa

For a software engineer, GitHub is one of those tools that spans both personal and professional development activities. Having brought open source to the masses, GitHub is a familiar platform for many. A newer addition to the GitHub platform is GitHub Actions, which began as a workflow engine and is now expanding into CI/CD.

Automic Automation Kubernetes Edition v21

Kubernetes has become a fixture in production for most IT Operations teams. The VMware “State of Kubernetes 2021 Report” shows a distinct shift towards a reliance on Kubernetes, with almost two thirds of respondents now saying they use it in production. Companies with over 500 developers are driving this adoption, with 78% reporting that they run mostly- or all-containerized workloads in production.

The Delicate Art of Monitoring Kubernetes

The process of monitoring servers and applications has undergone many transformations throughout the years. When it began, the main question was whether the server was up or down. Now, monitoring helps answer questions about the internal state of an application and infer its status (also called white box monitoring). Monitoring today's complex infrastructure systems can be just as much an art as a technical skill.

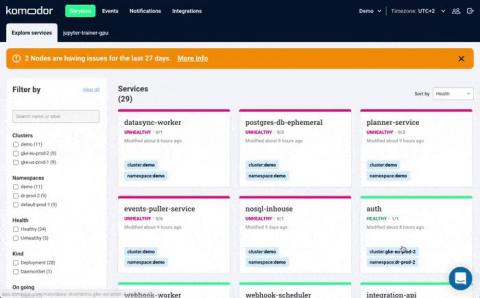

Diving Under the Hood With Our New 'Node Status' Feature

More than anything else, Kubernetes troubleshooting relies on the ability to quickly contextualize the problem with what’s happening in the rest of the cluster. As complicated as this may sound, SPEED is really the name of the game. After all, more often than not, you will be conducting your investigation under the glow of fires burning bright in production. Getting relevant context quickly and seeing things holistically is exactly what Komodor was created for.