Using Elastic machine learning rare analysis to hunt for the unusual

It is incredibly useful to be able to identify the most unusual data in your Elasticsearch indices. However, finding unusual content manually can be very difficult if you are collecting large volumes of data. Fortunately, Elastic machine learning can build a model of your data and apply anomaly detection algorithms to detect what is rare or unusual in it. And with machine learning, the larger the dataset, the better.

Only Autonomous Anomaly Detection Scales

Say you’re looking for a smart product to detect anomalies in your organization’s IT environment. A sales rep drops by and shows you all kinds of great artificial intelligence (AI) features with fancy-sounding algorithms. It sounds very impressive, and it seems like there is a lot of valuable AI in the product. But, in fact, the opposite is true. This is a manual AI product in a deceptive wrapper. Let me tell you more.

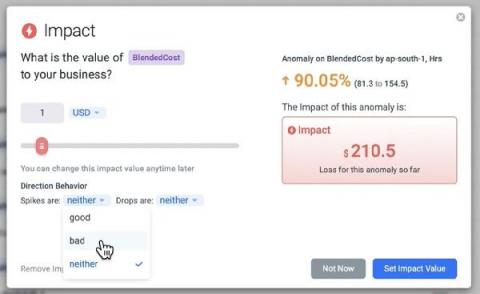

Introducing: Business Impact Alerts

Anodot is the only monitoring solution built from the ground up to find and fix key business incidents as they’re happening. Unlike most monitoring solutions, which focus on machine and system data to track performance, Anodot also monitors the more volatile and less predictable business metrics that directly impact your company’s bottom line. Now there’s an easy way to measure the business impact of every incident.

Outlier Detection: The Different Types of Outliers

How Xandr, AT&T's Adtech Company, Prevents Revenue Loss with Autonomous Business Monitoring

Anodot CEO and Co-Founder David Drai joined Amazon Web Services and Xandr to discuss the shift to machine learning-based anomaly detection in business monitoring. Xandr Chief Technology Officer Ben John shared how their advertising marketplace is using the Anodot platform to cut detection time from “up to a week to less than a day.” You can watch the webinar at the link above or read on for the highlights of that talk.

Anomaly detection 101

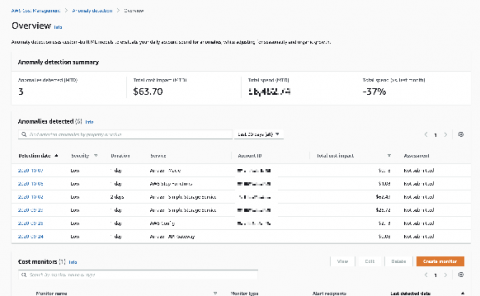

AWS Cost Anomaly Detection: One Element of Cloud Cost Intelligence

Every decision that an engineer makes in the cloud impacts cost. Yet we know that engineers aren’t cost experts, and many worry that asking them to care about cloud cost will slow them down and distract them from delivering customer value. Top cloud-native companies dedicate entire teams of engineers to build custom tools to measure unit cost and deliver cloud cost to engineering teams. But I’m guessing you don’t have eight engineers you can spare to build internal cost tools?

9 Key Areas to Cover in Your Anomaly Detection RFP

Evaluating a new, unknown technology is a complicated task. Although you can articulate the goals you’re trying to achieve, you’re probably faced with multiple solutions that approach the problem in different ways and highlight varying features. To cut through the clutter, you need to figure out what questions to ask in order to evaluate which technology has the optimal capabilities to get the job done in your unique setting.

Correlation Analysis: A Natural Next Step for Anomaly Detection

Over the last decade, data collection has become a commodity. Consequently, there has been a tremendous deluge of data in every area of industry. This trend is captured by recent research, which points to a growing volume of raw data and to the growth of market segments fueled by that data.