This Month in Datadog - December 2025

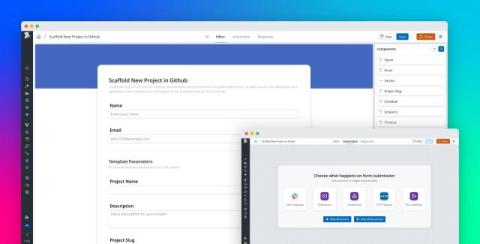

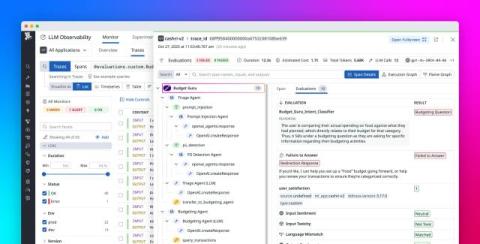

For our last episode of 2025, we’re focusing on Datadog releases announced at AWS re:Invent. Join Jeremy to see how you can manage logs at petabyte scale in your infrastructure, eliminate unneeded costs in Amazon S3 buckets, build agentic workflows, and detect credential leaks. Later in the episode, Scott spotlights how you can connect your AI agents to Datadog tools and context with our MCP Server.