The latest News and Information on Containers, Kubernetes, Docker and related technologies.

Kubernetes Throttling Doesn't Have To Suck. Let Us Help!

In the Kubernetes (K8s) community, there is a widespread misconception about CPU allocation and utilization. Even highly experienced SREs struggle with the way Kubernetes allocates CPU resources, which leads to misconfigured CPU allocations and severely negative outcomes. For starters, important service components suffer significant quality degradation caused by behind-the-scenes CPU limiting (or throttling).
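To make the throttling mechanism concrete, here is a minimal sketch of the arithmetic behind a CPU limit. It assumes the Linux CFS bandwidth controller with its default 100 ms period, which is how CPU limits are typically enforced on Linux nodes. The function names are hypothetical and exist only for illustration, and the model is simplified: it assumes every thread is CPU-bound and that the node has enough idle cores to run them all at once.

```python
CFS_PERIOD_US = 100_000  # assumed default CFS period: 100 ms, in microseconds


def cfs_quota_us(limit_millicores: int, period_us: int = CFS_PERIOD_US) -> int:
    """CPU time (in microseconds) a container may consume per period."""
    return limit_millicores * period_us // 1000


def throttled_us_per_period(
    limit_millicores: int, threads: int, period_us: int = CFS_PERIOD_US
) -> float:
    """Wall-clock time per period during which the container is throttled.

    Simplified model: `threads` CPU-bound threads run in parallel, so the
    quota is consumed `threads` times faster than wall-clock time passes.
    """
    quota = cfs_quota_us(limit_millicores, period_us)
    wall_time_to_exhaust = quota / threads
    return max(0.0, period_us - wall_time_to_exhaust)


if __name__ == "__main__":
    # A limit of 1000m ("one CPU") with 4 busy threads: the quota is used up
    # after 25 ms, and the container is paused for the remaining 75 ms.
    print(cfs_quota_us(1000))
    print(throttled_us_per_period(1000, threads=4))
```

Under these assumptions, a multi-threaded service can be throttled for most of each period even when its average CPU utilization looks well below the limit, which is one reason the behavior surprises people.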

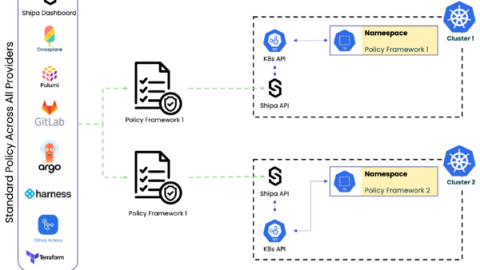

Kubernetes Multi-Tenancy

Things would be much simpler if everything we built on Kubernetes followed a single-tenant architecture, meaning a single application running on the entire Kubernetes cluster, accessible to a single team. As teams scale Kubernetes adoption within their organizations, they see a different scenario instead: multi-tenant deployments performed by multiple teams and, most probably, across multiple clusters.

How to create ConfigMaps in Kubernetes - Civo Academy

Getting Hands on with Harvester HCI

When I left Red Hat to join SUSE as a Technical Marketing Manager at the end of 2021, I heard about Harvester, a new Hyperconverged Infrastructure (HCI) solution with Kubernetes under the hood. When I started looking at it, I immediately saw use cases where Harvester could really help IT operators and DevOps engineers. There are solutions that offer similar capabilities, but there’s nothing else on the market like Harvester.

The top 10 AWS architectures built with Qovery in 2022

How Kubernetes dominated the containers space ft. Amazon EKS GM Chandler Hoisington

Centralized application dashboard for Kubernetes

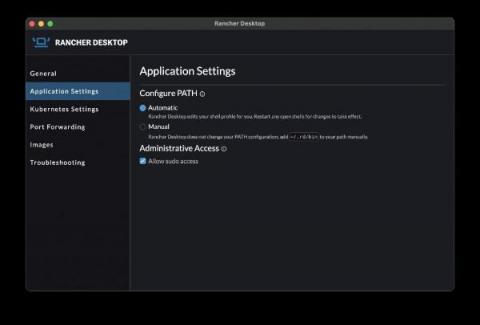

What's New in Rancher Desktop 1.3.0?

The release of Rancher Desktop 1.3.0 brings in some notable changes that are most visible on Mac and Linux while continuing to expand on experimental features.

Monitor Knative for Anthos with Datadog

Developed and released by Google in 2018 with contributions from IBM, VMware, Red Hat, and other companies, the Knative project is designed to make it as simple as possible to build, deploy, and scale serverless containers across your existing Kubernetes infrastructure. By operating on top of Google Anthos, Knative for Anthos takes this even further by allowing developers to build and deploy applications across hybrid environments that include both on-prem and cloud-hosted serverless clusters.