
Customers Affirm Value of Aiven Platform through G2 badges

The Future of Cloud Native Data is Now

Pepperdata Capacity Optimizer Next Gen: How Pepperdata Can Save 30% Off Your Cloud Bill

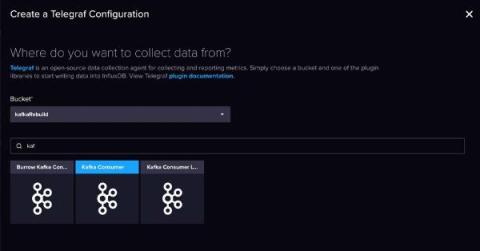

Build a Data Streaming Pipeline with Kafka and InfluxDB

InfluxDB and Kafka aren’t competitors; they’re complementary. Streaming data, and more specifically time series data, travels in high volumes and at high velocity. Adding InfluxDB alongside your Kafka cluster provides specialized handling for your time series data, including real-time queries and analytics and integration with cutting-edge machine learning and artificial intelligence technologies. Companies such as Hulu have paired their InfluxDB instances with Kafka.

Using Cribl Stream to Correct Misconfigured Data in Datadog

The challenge for every organization is gathering actionable observability information from all of its systems in a timely manner, without creating a substantial operational burden for the teams that manage the collection tooling. While each observability solution has its own benefits and challenges, the one burden teams commonly report is managing the metadata of metrics, traces, and logs.

Data Visualization for Everyone: How To Simplify the Process

Pick 3 for Your Data Management: Speed, Choice, and Flexibility

Data growth has significantly outpaced budgets, so the products we use have to do more. This is where optimization comes into play. Optimization is generally associated with reduction, which can be intimidating: what if something important is reduced? How can you identify what should be reduced? Reduction isn’t about removing context; it’s about removing repetitive data and meaningless fields, or even flattening JSON.
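The three reduction techniques named above can be sketched in a few lines. This is a minimal illustration, not any product's implementation: the function names, the dot-separated key convention, and the choice of which fields count as "meaningless" are all hypothetical assumptions.

```python
import json
from typing import Any, Iterable


def flatten(obj: dict[str, Any], prefix: str = "") -> dict[str, Any]:
    """Flatten nested JSON objects into dot-separated keys: {"a": {"b": 1}} -> {"a.b": 1}."""
    flat: dict[str, Any] = {}
    for key, value in obj.items():
        name = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            flat.update(flatten(value, name))
        else:
            flat[name] = value
    return flat


def reduce_events(events: Iterable[dict[str, Any]], drop_fields: set[str]) -> list[dict[str, Any]]:
    """Flatten each event, drop the listed fields, and skip exact repeats."""
    seen: set[str] = set()
    reduced: list[dict[str, Any]] = []
    for event in events:
        flat = flatten(event)
        # Remove fields judged meaningless (a hypothetical, caller-supplied list).
        for field in drop_fields:
            flat.pop(field, None)
        # A canonical JSON string serves as a fingerprint for duplicate detection.
        fingerprint = json.dumps(flat, sort_keys=True)
        if fingerprint in seen:
            continue
        seen.add(fingerprint)
        reduced.append(flat)
    return reduced


if __name__ == "__main__":
    events = [
        {"host": "web-1", "msg": "ok", "meta": {"agent": "v1", "debug": ""}},
        {"host": "web-1", "msg": "ok", "meta": {"agent": "v1", "debug": ""}},
        {"host": "web-2", "msg": "ok", "meta": {"agent": "v1", "debug": ""}},
    ]
    for event in reduce_events(events, {"meta.debug"}):
        print(event)
```

Dropping fields before fingerprinting is a deliberate choice: events that differ only in discarded fields collapse into one, while every field that carries context is kept.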

Optimization Without Recommendations: Automating Your Cost Optimization on Amazon EKS

Navigating Data Overload with Cribl

So many businesses today are playing “Hungry, Hungry, (Data) Hippo,” devouring every marble of information they can get their hands on. While it seems like every company has a robust data aggregation system, what most companies don’t have is an efficient way to control what data they store and where that data goes. We all want to make data-driven business decisions, but sorting through tons of data to find useful business insights can be like finding a needle in a whole farm.