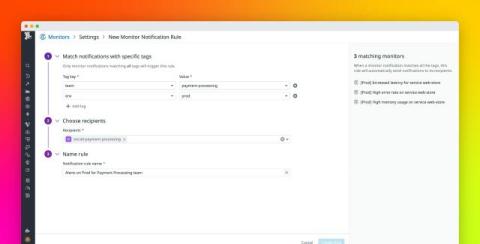

Route your monitor alerts with Datadog monitor notification rules

As organizations scale their infrastructure, monitoring systems can become a source of noise rather than insight. A clean, straightforward set of alerts for a handful of services can quickly spiral into a mess of overlapping thresholds, redundant triggers, and inconsequential notifications across hundreds (or thousands) of components. This flood of notifications can slow response times, overwhelm engineers, and increase the chance of overlooking critical problems.