k8s-monitoring-helm Chart Office Hours (March 2026)

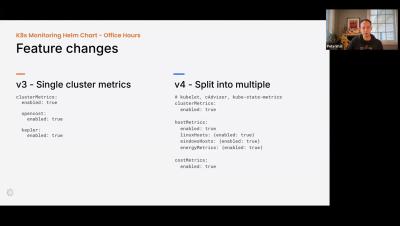

In the March edition of the Kubernetes Monitoring Helm chart office hours, we discuss the 4.0 major release, the upcoming 4.1 release and its features, and the upcoming deprecation of the 1.x and 2.0 versions.