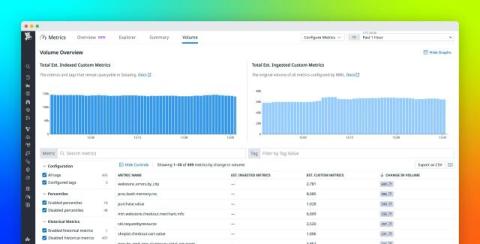

Best practices for end-to-end custom metrics governance

Custom metrics let you track what matters to your specific business and services and correlate that data with the rest of your telemetry. As your organization adds teams, services, and environments, your volume of custom metrics can grow along with it. To maintain critical visibility while keeping costs under control, organizations need an end-to-end approach to custom metrics governance.