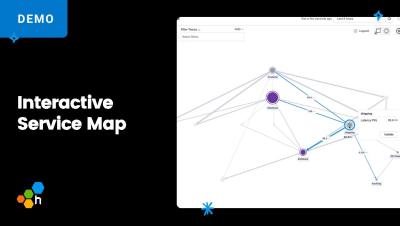

Is Your Observability Strategy Boardroom-Ready?

At LDX3 in London last week, I hosted two roundtables with engineering leaders. They confirmed what many of us are starting to feel: observability is no longer just important; it is becoming essential to how modern teams handle the pressure to move fast and stay resilient.