The Debugging Bottleneck: A Manual Log-Sifting Expedition

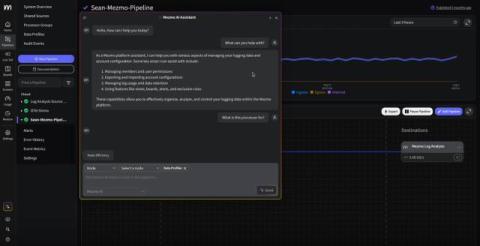

Imagine a developer at a fast-growing company. A customer support agent reports a critical issue: a user's recent order is stuck in a "pending" state. The agent provides a customer ID and a request ID. The developer's typical process is a familiar, painful dance of manually sifting through logs. It is slow, tedious, and prone to human error. The Mean Time to Resolution (MTTR) is measured in hours, not minutes, and each investigation is a significant drain on engineering resources.