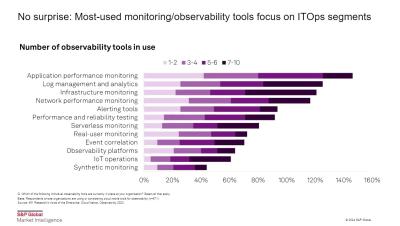

Applying a Data Engineering Approach to Telemetry Data

The exponential growth of telemetry data presents a significant challenge for organizations, which often overspend on data management without fully capitalizing on the data's potential value. To unlock that value, organizations must treat telemetry data as an enterprise asset and apply rigorous data engineering principles to it, so that it yields the critical insights and faster investigations it is meant to enable. The telemetry data platform approach democratizes access across disciplines and personas and fosters widespread use across the organization.