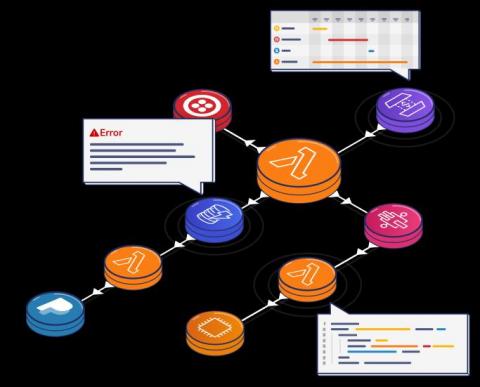

Managing Serverless Flows with AWS Step Functions

This blog post was written jointly by the teams at Lumigo and Cloudway. It is a step-by-step guide to AWS Step Functions workflows, including full monitoring and troubleshooting capabilities. We use the GDPR “right to be forgotten” workflow as the example application.