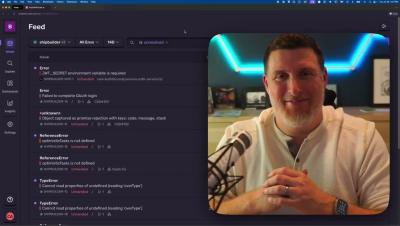

Automated Seer in Under 2 Minutes

What if you had 5 errors and, instead of coming back to 5 issues in your feed, you got 5 pull requests fixing them? Seer is Sentry's new AI debugging agent. It stitches together all the context from your logs, stack traces, distributed tracing, codebase, and issues to figure out what broke, where, and how to fix it. Seer automation lets you run that flow automatically and end up with a nice PR waiting for you to merge if it looks good. Check it out!