Key learnings from the 2026 State of DevSecOps study

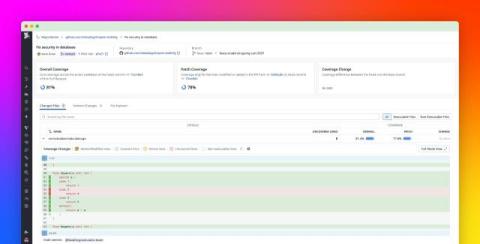

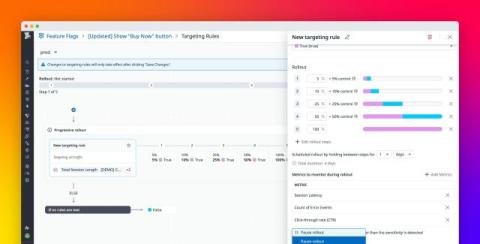

We recently released the 2026 State of DevSecOps study, in which we analyzed tens of thousands of applications along with their supply chain and build system dependencies. Our research revealed trends in security posture and best practices across the software development life cycle.