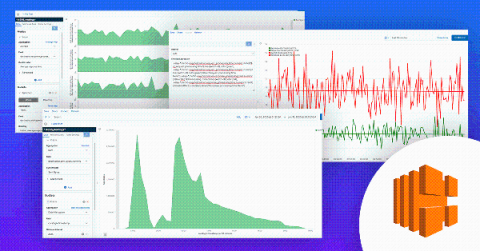

How to get the most out of your ELB logs

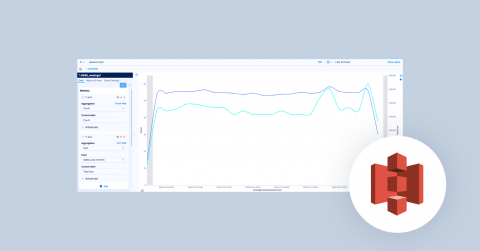

Amazon ELB (Elastic Load Balancing) allows you to make your applications highly available by using health checks and intelligently distributing traffic across a number of instances. It distributes incoming application traffic across multiple targets, such as Amazon EC2 instances, containers, IP addresses, and Lambda functions. You might have heard the terms, CLB, ALB, and NLB. All of them are types of load balancers under the ELB umbrella.