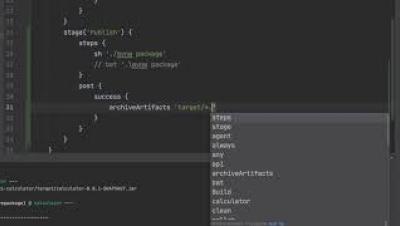

Are you on top of newly introduced errors in your CI/CD releases?

Log files are infamous for being “noisy”. Without the right log management solution, finding a specific piece of information, or using the logs to reproduce a critical error, is a complex undertaking. If you’re working with CI/CD, how do you attribute new errors to a particular release? How do you investigate those errors and make sure that your customers aren’t being impacted? Faster releases mean shorter development and testing cycles before new code reaches production.

Are you paying too much for your logging solution?

The cost of logging is one of the big problems of a scaled software system. Logging solutions now need to support far more than they ever have, and meeting those demands requires a real investment. However, the up-front costs of a custom-built logging solution are prohibitive for many organizations. No business wants its bottom line affected by logging costs. That’s where Coralogix comes in.

.NET Logging: Best Practices for your .NET Application

Logging is a key requirement of any production application. .NET Core offers support for outputting logs from your application. It delivers this capability through a middleware approach built on a modular library design. Some of these libraries are already built and supported by Microsoft and can be installed via the NuGet package manager, but third-party or even custom extensions can also be used for your .NET logging.

Five things to Log in your CI Pipeline: Continuous Delivery

Logs in continuous delivery pipelines are often entirely ignored, right up until something goes wrong. We usually find ourselves wishing we’d put some thought into our logs once we’re in the midst of trawling through thousands of lines. To prevent this, we can add DevOps metrics to our logs, which gives us greater observability and insight into anything going wrong in our pipelines.
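As a minimal sketch of what adding DevOps metrics to pipeline logs can look like, the Python below emits one JSON line per pipeline stage, recording the stage’s outcome and duration. The `log_stage` helper and the field names (`stage`, `status`, `duration_ms`, `commit`) are hypothetical choices for illustration, not a schema required by any particular CI tool.

```python
import json
import logging
import time

# Hypothetical helper: the field names below are illustrative, not a standard schema.
logger = logging.getLogger("ci.pipeline")


def log_stage(stage: str, status: str, started: float, **extra) -> str:
    """Log one pipeline stage as a single JSON line and return that line.

    `started` is a time.monotonic() reading taken when the stage began;
    any extra keyword arguments (e.g. a commit hash) are added as fields.
    """
    record = {
        "stage": stage,
        "status": status,
        # Elapsed wall time for the stage, in whole milliseconds.
        "duration_ms": round((time.monotonic() - started) * 1000),
        **extra,
    }
    line = json.dumps(record, sort_keys=True)
    logger.info(line)
    return line


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO, format="%(message)s")
    t0 = time.monotonic()
    # ... run the build step here ...
    log_stage("build", "success", t0, commit="abc123")  # hypothetical commit id
```

Writing one JSON object per line keeps each stage’s metrics machine-parseable, so a log platform can aggregate durations or failure counts per stage without custom parsing rules.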

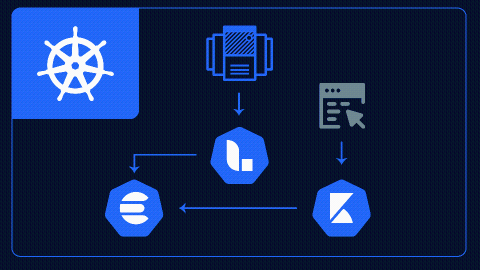

Running Elasticsearch, Logstash, and Kibana on Kubernetes with Helm

Kubernetes (or “K8s”) is an open-source container orchestration tool originally developed by Google. In this tutorial, we will use Kubernetes to overcome some of the operational challenges of working with the Elastic Stack.

Logging Best Practices: From Simple to Space Age

It is tempting to consider logging as a simple, solved problem. We write a log, check our file and, boom, we’ve cracked it. Yet those of us who have sat up at three in the morning, trawling through log files over an unreliable SSH connection, know that this is simply not enough. As your system scales, so too must the sophistication of your tooling. Your logging best practices must be scalable and ready to support your efforts.

Logstash CSV: Import & Parse Your Data [Hands-on Examples]

The CSV file format is widely used across the business and engineering world as a common format for data exchange. Its basic concepts are fairly simple, but unlike JSON, which is more standardized, CSV comes in various flavors you are likely to encounter. This lesson will show you how to import and parse CSV data using Logstash before it is indexed into Elasticsearch.
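To illustrate the “flavors” problem outside Logstash (where you would typically declare the separator explicitly in the csv filter), the Python sketch below parses the same record written in two dialects: comma-delimited with a quoted field, and semicolon-delimited. It uses the standard library’s `csv.Sniffer` to guess each dialect. The sample strings and the `parse` helper are hypothetical, and sniffing is a heuristic that can guess wrong on short or irregular input.

```python
import csv
import io

# Hypothetical samples: the same record in two common CSV flavors.
COMMA = 'name,city\n"Smith, J",Paris\n'      # comma-delimited, field quoted
SEMICOLON = "name;city\nSmith, J;Paris\n"    # semicolon-delimited, no quoting


def parse(text: str) -> list[dict]:
    """Guess the delimiter and quoting with csv.Sniffer, then parse rows into dicts.

    Restricting the candidate delimiters stops letters that happen to appear
    equally often on every line from being mistaken for a separator.
    """
    dialect = csv.Sniffer().sniff(text, delimiters=",;\t|")
    return list(csv.DictReader(io.StringIO(text), dialect=dialect))


if __name__ == "__main__":
    print(parse(COMMA))
    print(parse(SEMICOLON))
```

Both inputs yield the same row, which is the point: the data is identical, only the flavor differs, so the parser has to be told (or has to infer) which flavor it is reading.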