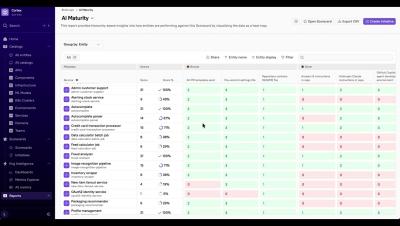

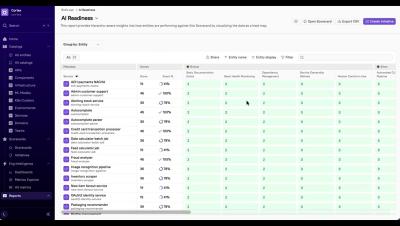

A framework for measuring effective AI adoption in engineering

These days, engineering leaders find themselves caught between a rock and a hard place. On paper, AI adoption looks like an unqualified success. Developers are shipping more code faster than ever, pull request volumes are up, and teams report feeling more productive. Leaders rush to LinkedIn to share plans to scale adoption, because their teams are just so much more efficient. But then the incidents and bug reports start piling up.