The Claude Bill is Too Damn High

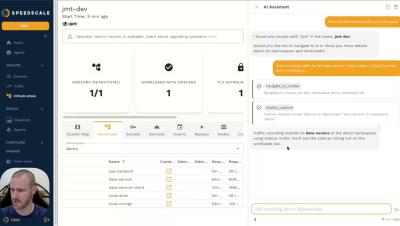

Stop overpaying for AI reasoning by trading expensive GPU cycles for efficient, deterministic testing. This video explores how tools like linters and traffic replay can complement Claude, helping you fix bugs more accurately while cutting token usage by up to 50%. Visit speedscale.com to learn more.

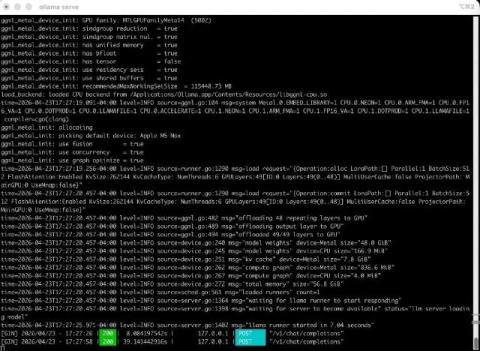

Run Local LLMs on Mac to Cut Claude Costs

Part of the motivation for this post is how cloud API economics are shifting: Anthropic is moving large enterprise customers toward per-token, usage-based billing (unbundled from flat seat fees), which makes “always call the API” a moving cost line for teams at scale. A hybrid or local layer is one way to keep spend bounded while you still use premium models where they matter.

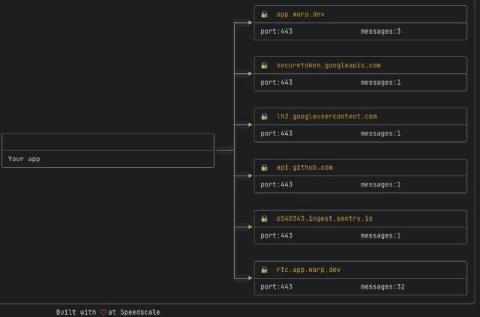

What the Warp Terminal Sends Home

The terminal is a developer’s most trusted tool. It sees your source code, your secrets, the commands you run in production. When a terminal adds AI and cloud features, it’s worth asking what it’s doing on the network.

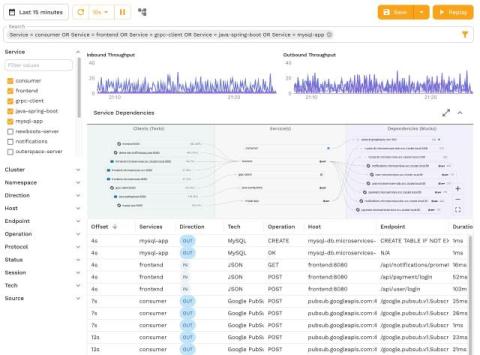

Replace API Synthetics with Traffic Replay

The alert fires at 2 AM. Your observability platform’s synthetic test just failed. Login is broken. So you open your laptop, pull up the dashboard, and stare at a single red dot: the browser test. You know the problem is somewhere in the stack, but not where. Is it the auth service? The token validator? The user profile API? The API gateway timing out? You’re about to spend the next 45 minutes correlating traces, tailing logs, and manually hitting endpoints until you find it.