5 Ways You Can Utilize Observability to Make Your Next Migration Easier

When people hear the word “migration,” they typically think about migrating from on-prem to the cloud. In reality, companies do migrations of varying types and sizes all the time. However, many teams delay making critical migrations or technical upgrades because they don’t have the proper tools and frameworks to de-risk the process.

How Traceloop Leverages Honeycomb and LLMs to Generate E2E Tests

At Traceloop, we’re solving the single thing engineers hate most: writing tests for their code. More specifically, writing tests for complex systems with lots of side effects. Even in an imaginary system that is a lot simpler than most architectures I’ve seen, an API call to a service sets off many things asynchronously in the backend, and some of them are conditional.

Observing the Future: The Power of Observability During Development

Just when you thought everything that could be shifted left has been shifted left, we’re sorry to say you’ve missed something: observability. Modern software development—where code is shipped fast and fixed quickly—simply can’t happen without building observability in before deployments happen. Teams need to see inside the code and CI/CD pipelines before anything ships, because finding problems early makes them easier to fix.

All the Hard Stuff Nobody Talks About when Building Products with LLMs

Earlier this month, we released the first version of our new natural language querying interface, Query Assistant. People are using it in all kinds of interesting ways! We’ll have a post that really dives into that soon. However, I want to talk about something else first. There’s a lot of hype around AI, and in particular, Large Language Models (LLMs).

5 Ways to Increase Release Velocity with Observability

The pressure on today’s development teams is real: innovate, release quickly, and then do it all again, only faster. Is it any surprise that studies are showing 83% of software developers are feeling burnout? We’re here to remind you that it doesn’t have to be this way.

Developing with OpenAI and Observability

Honeycomb recently released our Query Assistant, which uses ChatGPT behind the scenes to build queries based on your natural language question. It's pretty cool. While developing this feature, our team (including Tanya Romankova and Craig Atkinson) built tracing in from the start, and used it to get the feature working smoothly. Here's an example. This trace shows a Query Assistant call that took 14 seconds. Is ChatGPT that slow? Our traces can tell us!

5 Ways Honeycomb Saves Time, Money, and Sanity

There’s a reason everyone dreads debugging, especially in today’s complex cloud systems: it’s at the high-stakes nexus of nervous senior management, overworked engineers, never-ending rabbit holes, copious buckets of time, and fickle customers.

A Systematic Approach to Collaboration and Contributing to the Lattice Design System

The Honeycomb design team began work on Lattice in early 2021. Over several months, we worked to clean up and optimize typography, color, spacing, and many other product experience areas. We conducted an extensive audit of all components, documenting design inconsistencies and laying the foundation for a sustainable design system. However, a more extensive evaluation and audit were necessary before updating or developing components.

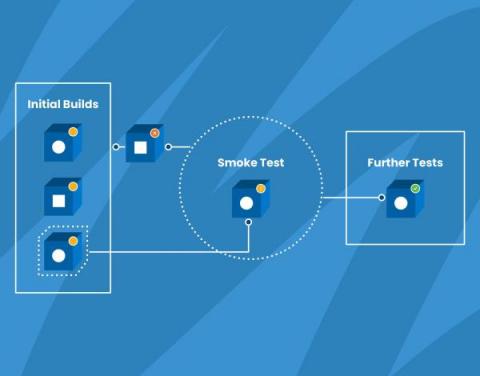

How We Use Smoke Tests to Gain Confidence in Our Code

Wikipedia defines smoke testing as “preliminary testing to reveal simple failures severe enough to, for example, reject a prospective software release.” Also known as confidence testing, smoke testing is intended to focus on some critical aspects of the software that are required as a baseline.

Three Ways to Make the Most out of Honeycomb Metrics

A while ago, we added Metrics to our observability platform so teams could easily see system information right next to their application observability data—no tool or team switching required. So how can teams get the most out of metrics in an observability platform? We’re glad you asked! We had this conversation with experts at Heroku. They’ve successfully blended metrics and observability and understand what is most helpful to know.

Ask Miss O11y: To Metric or to Trace?

Dear Miss O11y, I remember reading quite interesting opinions from you about usage of metrics and traces in an application. Did you elaborate on those points in a blog post somewhere, so I can read your arguments to forge some advice for myself? I must admit that I was quite puzzled by your stance regarding the (un)usefulness of metrics compared to traces in apps in some contexts (debugging).

Errors Got You Down? Honeycomb and OpenTelemetry are Here to Help

It’s 5:00 pm on a Friday. You’re wrapping up work, ready to head into the weekend, when one of your high-value customers Slacks you that something’s not right. Requests to their service are randomly timing out and nobody can figure out what’s causing it, so they’re looking to your team for help. You sigh as you know it’s one of those all-hands-on-deck situations, so you dig out your phone and type the "going to miss dinner" text.

Feature Focus: April 2023

You know the old saying, I’m sure: “April deploys bring May joys.” Okay, maybe it doesn’t go exactly like that, but after reading what we’ve been up to for the past month, I think it might be time for an update. Let’s dive into our Feature Focus April 2023 edition.

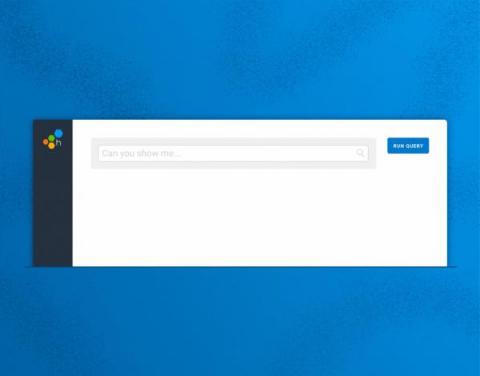

Observability, Meet Natural Language Querying with Query Assistant

Engineers know best. No machine or tool will ever match the context and capacity that engineers have to make judgment calls about what a system should or shouldn’t do. We built Honeycomb to augment human intuition, not replace it. However, translating that intuition has proven challenging. A common pitfall in many observability tools is mandating use of a query language, which seems to result in a dynamic where only a small percentage of power users in an organization know how to use it.

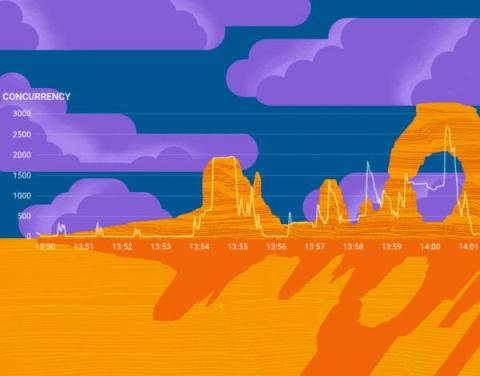

Our Favorite #chArt

Heatmaps are a beautiful thing. So are charts. Even better is that sometimes, they end up producing unintentional—or intentional, in the case of our happy o11ydays experiment—art. Here’s a collection of our favorite #chArt from our Pollinators Slack community. Today would be a great time to join if you’re into good conversation about OpenTelemetry, Honeycomb-y stuff, SLOs, and obviously, art.