Seedance 2.0 vs Traditional Production: Is AI Finally Production-Ready?

Every few years, a new tool appears that forces the creative industry to pause and reassess its assumptions.

In 2026, that conversation is happening again, this time around AI video.

The question is no longer whether AI can generate impressive demo clips. That phase is over. The real question is far more consequential:

Has AI finally become production-ready?

Seedance 2.0 is at the center of that debate.

While many AI video models produce visually striking outputs, most struggle when placed under real production constraints. Long sequences break. Characters drift. Motion loses weight. Continuity collapses.

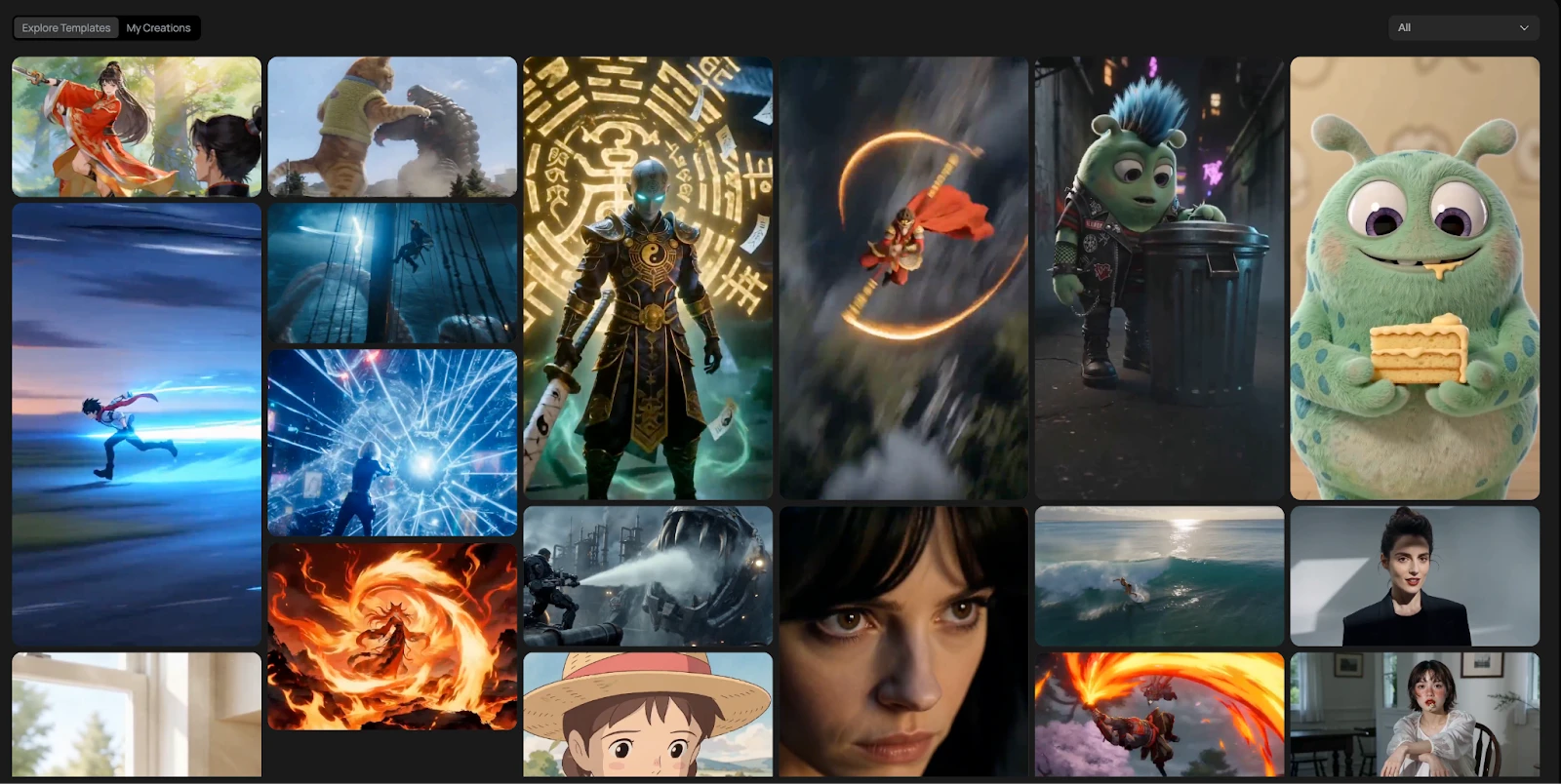

Seedance 2.0 does not just improve visuals. It addresses structural weaknesses that previously kept AI outside serious production pipelines. For this article, we tested Seedance 2.0 through Loova, a platform that offers early access to the model.

To understand why that matters, we need to define what “production-ready” actually means.

What “Production-Ready” Really Means

In professional environments, production-ready does not mean “looks good in a demo.”

It means:

- Stable character identity across scenes

- Controlled and believable camera motion

- Lighting and environmental consistency

- Scene continuity over extended sequences

- Predictable output under structured prompts

- Reduced need for heavy corrective editing

Studios rely on predictability. Schedules are tight. Budgets are structured. Revisions are costly.

AI video tools have historically failed this predictability test.

They impressed visually but introduced uncertainty.

In our testing, Seedance 2.0 changes that dynamic in several concrete ways.

Traditional Production vs AI Workflows

Before examining Seedance 2.0 specifically, it helps to compare traditional production processes with AI-driven ones.

Traditional Production

- Pre-production planning

- Storyboarding

- Shooting

- Reshooting if necessary

- Editing

- Color correction

- Sound design

- Final delivery

This pipeline ensures control. It also introduces cost, time, and logistical constraints.

Early AI Video Models

- Write prompt

- Generate clip

- Regenerate repeatedly

- Patch inconsistencies in editing

- Repeat

The promise was speed. The reality was instability.

Most AI tools shortened certain steps but added others in the form of regeneration and correction.

Seedance 2.0 attempts to close that gap.

Motion Realism: The Hardest Problem in AI Video

Motion has always been the Achilles’ heel of generative video.

Earlier models often produced:

- Floating camera movement

- Weightless characters

- Inconsistent tracking

- Spatial distortion during transitions

These flaws become obvious in longer sequences.

Seedance 2.0 demonstrates stronger physical grounding.

Tracking shots feel intentional rather than drifting. Push-ins carry weight. Movement adheres more convincingly to implied physics.

The improvement is subtle but critical.

Production teams care less about flashy effects and more about believable spatial coherence. Motion that feels grounded reduces the need for stabilization, reframing, and corrective editing.

In professional terms, that means less cleanup.

Character Identity Across Scenes

Character drift has been one of the biggest obstacles to narrative AI video.

A character might look consistent within a single clip but subtly change between scenes:

- Facial proportions shift

- Clothing details alter

- Hair texture varies

- Body proportions drift

These inconsistencies break immersion and complicate storytelling.

Seedance 2.0 significantly reduces identity drift.

When a character is introduced in one scene, their defining features persist more reliably into the next. This stability enables multi-scene narratives without constant regeneration.

For episodic creators and branded storytelling teams, this is not a cosmetic improvement. It is foundational.

Without identity stability, continuity editing becomes impossible.

Scene Continuity and Temporal Logic

Production-ready video must understand sequence.

Earlier AI models often treated prompts as independent frames. If a prompt described a beginning, a transition, and a final shot, the model might generate visually impressive fragments, but not a coherent progression.

Seedance 2.0 shows improved temporal logic.

It demonstrates awareness that scenes connect.

While not perfect, it better preserves:

- Order of described events

- Camera movement continuity

- Environmental consistency

This allows creators to build structured sequences instead of stitching unrelated clips.

In production terms, that reduces fragmentation.

Longer Sequences Without Structural Collapse

Short-form AI video has been impressive for years.

The real stress test is duration.

When clips extend beyond a few seconds, many models deteriorate:

- Motion begins to drift

- Characters morph subtly

- Backgrounds shift

- Lighting changes unpredictably

Seedance 2.0 performs more reliably in extended sequences.

This does not mean it replaces traditional filmmaking. It means it holds together long enough to function in:

- YouTube storytelling

- Product demonstrations

- Narrative explainers

- Concept visualizations

The ability to maintain structure across longer clips is one of the clearest indicators of production maturity.

Integrated Audio Alignment

Audio synchronization has often been treated as secondary in AI video generation.

Many earlier systems produced voice and visuals independently, leading to:

- Slight timing mismatches

- Unnatural dialogue pacing

- Poor lip-sync alignment

Seedance 2.0 improves alignment between generated audio layers and visual timing.

It does not eliminate the need for professional sound design, but it narrows the gap.

For online content production, this level of synchronization is often sufficient without extensive post-production.

Workflow Efficiency: Where the Real Shift Happens

Visual improvements are important. Workflow improvements are transformative.

Production-ready AI is not defined solely by output quality. It is defined by how much correction is required after generation.

Seedance 2.0 reduces:

- Full-scene regeneration

- Character resets

- Motion correction

- Continuity patching

Instead of rebuilding entire sequences, creators refine. That shift changes the role of AI in production. It moves from experimental tool to structural component.

Practical Integration in Modern Creative Pipelines

A model alone does not determine usability. Integration matters.

Seedance 2.0 can be accessed within platforms like Loova, where it operates alongside editing and image-generation tools in a unified workspace.

This integration matters because it removes fragmentation. The same workspace covers:

- Text-to-video and image-to-video generation

- Motion-based tools

- Thumbnail image creation

- Editing adjustments

Instead of exporting between systems, teams maintain continuity inside one environment.

Production readiness is not only about output quality. It is about pipeline compatibility.

Can Seedance 2.0 Replace Traditional Production?

The honest answer is nuanced.

Seedance 2.0 does not eliminate the need for:

- Large-scale cinematic shoots

- Complex live-action choreography

- High-end VFX pipelines

However, it meaningfully replaces or accelerates:

- Pre-visualization

- Online marketing video production

- Social storytelling

- Mid-budget branded content

- Concept development

For independent creators, it lowers the barrier to professional aesthetics.

For studios, it introduces a new layer in the pipeline.

Why Studios Are Paying Attention

Large studios prioritize control and predictability. When AI models begin delivering stable characters, controlled motion, and reliable sequence continuity, they stop being novelties and become strategic tools.

Studios are not necessarily afraid of AI replacing them. They are watching how it integrates into existing production structures.

Seedance 2.0 signals that AI is moving closer to structural reliability.

And reliability is what unlocks adoption.

The Broader Industry Shift

The narrative around AI video has shifted from spectacle to stability. Early models aimed to impress. Newer models aim to endure.

Seedance 2.0 represents this transition.

It shows that generative video is evolving from isolated frame generation to structured cinematography. That evolution does not end traditional production. It expands it.

Final Assessment: Is AI Finally Production-Ready?

Production readiness is not binary.

Seedance 2.0 does not perfectly replicate traditional filmmaking. But it crosses a critical threshold.

It demonstrates:

- Motion realism with weight

- Character stability across scenes

- Improved temporal logic

- Reduced corrective friction

Those improvements mark a shift from experimentation toward integration. AI video is no longer an outsider to production workflows. It is becoming part of them.

And once tools reach that point, adoption accelerates.

Seedance 2.0 may not replace studios. But it proves that AI video is no longer playing in a sandbox. It is stepping onto the stage.