Is GPTHumanizer AI Legit? An Honest Hands-On Review (2026)

Getting flagged as “AI” is the worst

You write a draft blog with ChatGPT.

You’re happy with it.

Then a detector slams you in the face with a “Likely AI Generated” label.

But the worst part? The content doesn’t have to be bad. Sometimes it’s just… too smooth. Too consistent. Too ordinary. And too forgettable to hold a reader’s attention.

This market is now jam-packed with AI humanizers that all make basically the same promises: make your writing more natural, more readable, more “human.”

So I stopped listening to promises and ran a real test.

I tested GPTHumanizer AI, a pretty widely used free AI humanizer, in the same way I’d test any tool I might actually use: I pasted real drafts in, ran it once, and looked to see what actually changes on the page.

Why this matters more after Google’s December update

If you’re publishing SEO content, December 2025 was a vibe check.

And whether you are an AI user or not, the message is crystal clear: Pages that look mass-produced and low-value are the ones that get squeezed.

Also, Google's attitude towards AI is much more pragmatic than people think. AI is not "banned." However, generating lots of low-value pages at scale without adding anything for readers can violate its spam policies (scaled content abuse).

That was my thought process for this review: not "can it do magic," but rather "can it make a draft read like a human wrote it?"

My test setup (simple, repeatable)

I used three short drafts I’d realistically publish:

- An academic paragraph (about 80 words).

- A blog paragraph (about 100 words).

- A business email (about 90 words).

I ran Lite / Pro / Ultra once each. One pass per tier. No cherry-picking.

Then I checked ZeroGPT before vs after, and I judged the output like a colleague would: “Would you publish this?”

Results (before vs after)

Here’s the deal: I didn’t want vibes. I wanted numbers and readability.

So for each draft, I checked ZeroGPT before and after, then scored the output like I’d score a coworker’s draft: would I publish it, and did it keep the original meaning?

The ZeroGPT AI probability (Before → After) is the “AI rate change” here.

| Draft type | Words | Model used | Quality (1–10) | Meaning preserved | Grammar/typos | ZeroGPT AI% (Before → After) | Change (percentage points) |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Academic paragraph | 80 | Lite | 9 | High | Occasionally | 100% → 0% | 100 |
| Blog paragraph | 100 | Pro | 8 | Medium | Occasionally | 100% → 0% | 100 |
| Business email | 90 | Ultra | 6 | Medium | Occasionally | 97% → 89% | 8 |
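The change figures in the results above are plain subtraction, but the relative drop is worth a glance too, especially for the email. Here is a minimal sketch of both calculations using the three before/after scores from my test. The `results` dictionary and the `score_change` helper are illustrative names I made up for this example; nothing here calls ZeroGPT or GPTHumanizer.

```python
# ZeroGPT AI % (before, after), copied from the results in this review.
results = {
    "Academic paragraph": (100, 0),
    "Blog paragraph": (100, 0),
    "Business email": (97, 89),
}


def score_change(before, after):
    """Return (percentage-point drop, drop relative to the starting score in %)."""
    point_drop = before - after
    # Guard against a 0% starting score, where a relative drop is undefined.
    relative_drop = 100 * point_drop / before if before else 0.0
    return point_drop, relative_drop


changes = {name: score_change(b, a) for name, (b, a) in results.items()}

for name, (points, relative) in changes.items():
    print(f"{name}: {points} points ({relative:.1f}% relative)")
```

For the email, that works out to an 8-point drop, or roughly 8.2% relative to where it started, which is why I call that result "barely changed."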

What struck me right away: when GPTHumanizer “hits,” it’s not because it sprinkles in more sophisticated word choices.

It’s because it stops writing in a template voice.

The cadence becomes less even. The transitions feel less copy-pasted. The paragraph opens up a little, and that’s usually what moves both the reading feel and the AI% in the right direction.

Test sample (Academic — ~80 words, before humanize)

Below is the exact kind of “too smooth, too uniform” paragraph I used. It’s clean, but it reads a little like a template, which is exactly what makes a detector jumpy.

Academic Sample:

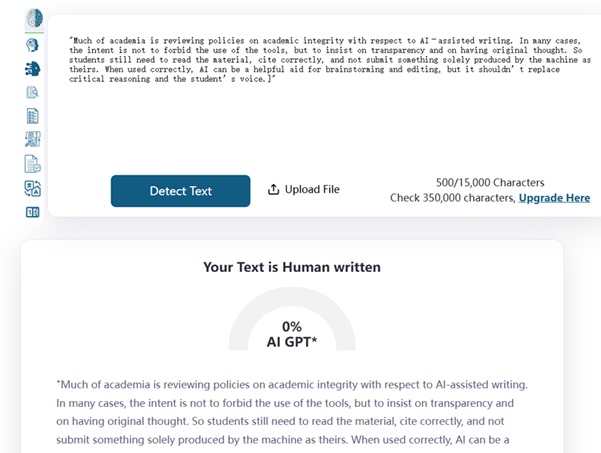

"Many universities are updating academic integrity policies to address AI-assisted writing. In most cases, the goal is not to ban tools outright, but to require transparency and preserve original thinking. Students still need to understand the material, cite sources correctly, and avoid submitting machine-generated work as their own. Used responsibly, AI can support brainstorming and editing, but it should not replace critical reasoning or the student’s voice."

ZeroGPT result (before → after humanizing): 100% AI → 0% AI

Test sample (Blog — ~100 words, before humanize)

Here’s the kind of blog paragraph I used: it’s “fine,” but it has that classic AI cadence—neatly balanced sentences, predictable transitions, and a slightly generic tone. That’s usually enough to make detectors twitch.

Blog Sample:

"AI writing tools have become increasingly popular for creating blog content quickly and efficiently. However, AI-generated text can sometimes sound repetitive, overly formal, or lacking in a natural flow. This is why many writers use AI humanizers to improve readability and make content sound more human. By adjusting sentence structure, word choice, and tone, an AI humanizer can help remove common AI patterns while preserving the original meaning. As a result, the final draft may feel more engaging to readers and less likely to be flagged by AI detectors."

ZeroGPT result (before → after humanizing): 100% AI → 0% AI

Test sample (Business Email — ~90 words, before humanize)

Here’s the kind of business email I used: it’s polite and clear, but it has that classic AI “corporate template” feel, with overly smooth phrasing, safe generic wording, and a predictable structure. It reads fine, but it doesn’t sound like a real person who actually wants a reply.

Business Email Sample:

"Hi Bob,

I hope you are doing well. I’m reaching out to follow up on our previous conversation and see if you had any updates regarding the proposal I sent last week. Please let me know if you have any questions or if you would like me to provide additional information. I’m happy to schedule a quick call at your convenience to discuss next steps. Thank you for your time, and I look forward to hearing from you."

ZeroGPT result (before → after humanizing): 97% AI → 89% AI

What GPTHumanizer AI does well

Here’s where it worked.

GPTHumanizer AI didn’t “upgrade vocabulary” on the academic and blog samples. It altered the shape of the writing. The cadence became less even, the transitions felt less copy-pasted, and the paragraph actually looked revised rather than written in one go.

If your draft sounds like it uses a template voice, GPTHumanizer can really erase that for you, and do it fast.

Where GPTHumanizer AI is still weak

The short version: it doesn’t always move the detector needle, especially on shorter text.

My business email test is a good example. After Ultra, the email did read more like a real person wrote it, less stiff, less corporate boilerplate. But the AI rate barely changed (97% → 89%). That’s still high.

So if you’re expecting every short email to flip from “AI” to “human,” you’ll be disappointed sometimes.

GPTHumanizer can improve readability without always collapsing the AI score, especially on short, formal emails.

Who this is for (and who should skip it)

I think it makes sense if you publish blogs or long-form content. That's where I saw the biggest difference, both in the reading feel and in the detector's results.

If you're writing short business emails, it is hit or miss. Your email might sound better, but you can still get a high AI percentage afterward.

Bottom line: it's better for blog/academic-type paragraphs. Not so reliable for short formal emails.

Final verdict: is GPTHumanizer AI legit?

Yeah.

Not because it makes promises. Because when you test it for real, rather than just clicking the convert button and taking its word for it, it can take that “too smooth, too consistent” AI draft and make it read more human. In my longer samples, it even took ZeroGPT from 100% AI to 0% AI, which is really hard to ignore.

Just keep your expectations realistic. Sometimes you win on readability but not on AI rate, and the email test showed exactly that.

Bottom line

If you want a practical tool to reduce the “AI vibe” in longer writing, it’s worth using. If you need every short email to show 0%, it won’t always deliver.