AI Synergy: Using GPT, LLaMA, and PaLM Models

Image Source: depositphotos.com

The public release of ChatGPT in late 2022 ushered in a new era of Generative AI applications in business. GPT by OpenAI, LLaMA by Meta AI, PaLM by Google AI, and other text generation models are now widely used for both personal and professional purposes. The Generative AI market is expected to reach $66.62 billion in 2024 and grow at an annual rate of 20.80%, leading to a market volume of $207.00 billion by 2030.

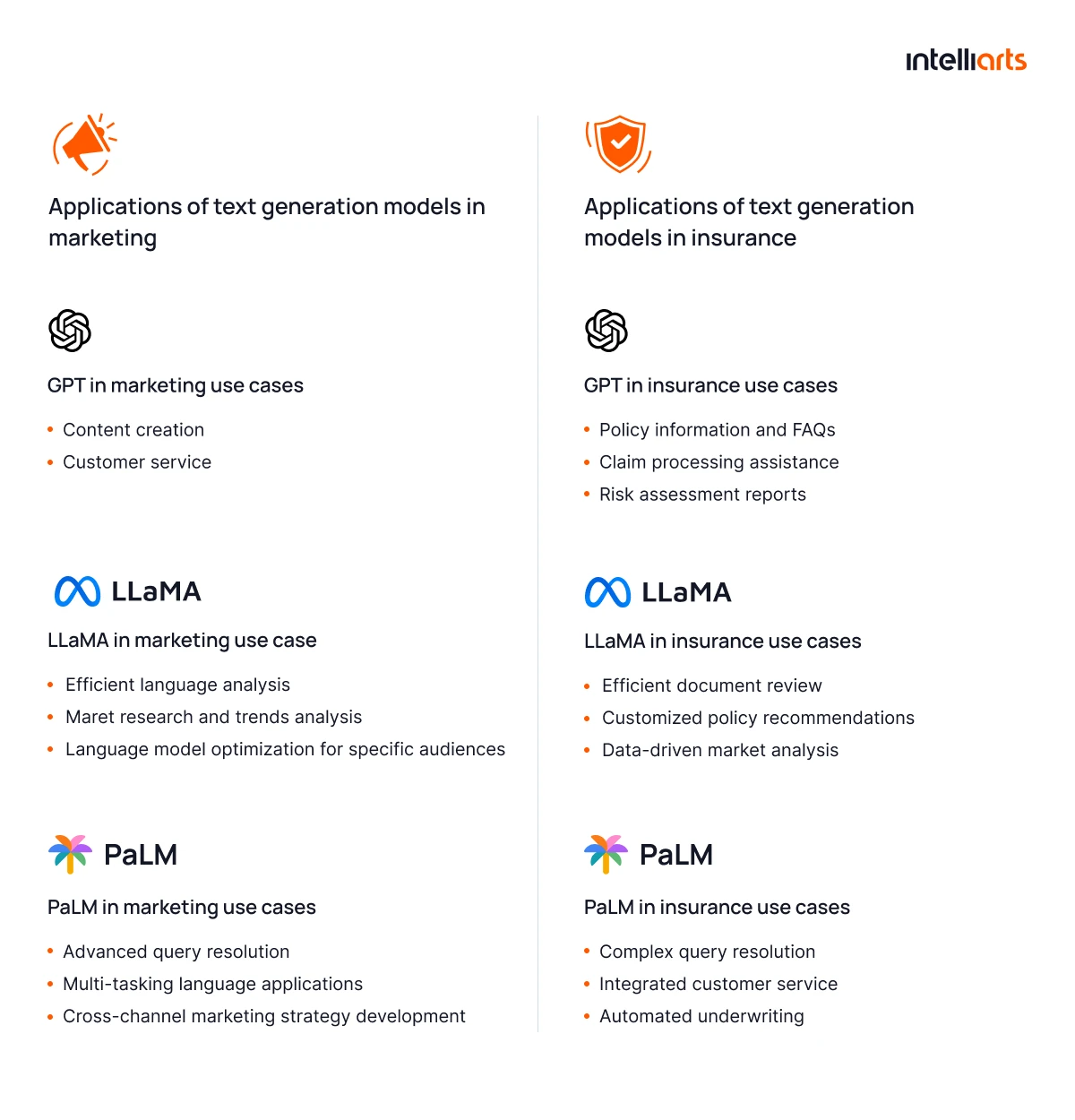
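The 2030 figure follows from compounding the 2024 figure at the stated rate. Here is a quick sketch of that arithmetic, assuming the 20.80% rate applies every year from 2024 through 2030 (the variable names are ours, chosen for illustration):

```python
# Compound growth check for the market figures quoted in the text.
base_2024 = 66.62       # market size in 2024, billions of USD (from the text)
annual_growth = 0.2080  # annual growth rate (from the text)
years = 2030 - 2024     # six compounding periods, an assumption about how the rate is applied

projected_2030 = base_2024 * (1 + annual_growth) ** years
print(f"Projected 2030 market size: ${projected_2030:.2f} billion")
```

The result lands at roughly $207 billion, which is consistent with the forecast above.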

In this post, you’ll learn about the potential uses of text generation models and explore a real-world example of applying a text generation model in business. Specifically, we’ll take two industries, marketing and insurance, to dive deep into the use cases of ChatGPT, LLaMA, and PaLM.

GPT, LLaMA, and PaLM text generation models: What is the difference?

Let’s get started by defining a text generation model:

A text generation model is an ML-based solution that automatically creates human-like text based on input data and pre-trained language patterns.

The main differences between GPT, LLaMA, and PaLM text generation models lie in their scale (the number of parameters) and their underlying architectures. They also differ in intended use:

- GPT models are often used in applications like chatbots, content generation, and language translation

- LLaMA is designed to be a research tool that helps in understanding the workings of large language models and improving them

- PaLM is designed to demonstrate the capabilities of the Pathways system, which aims to create more general and efficient AI models

Each model represents a different approach to solving the challenges of natural language processing and generation.

“When selecting between GPT, LLaMA, and PaLM, the real differentiator is understanding how each model behaves in your specific workflow. Our customers always see the best results when they treat LLMs as configurable tools rather than one-size-fits-all solutions. Even small adjustments to prompting, context design, or fine-tuning can dramatically shift model performance in real business scenarios.” — Volodymyr Mudryi, Data Science and ML Engineer at Intelliarts

Applications of text generation models in marketing

Although publicly available large language models (LLMs) are a relatively new technology, they already have a broad range of applications in marketing, including:

#1 GPT in marketing use cases

Here are some of the possible applications of the GPT models in marketing and ways of using ChatGPT for marketing software:

- Content creation: Create blogs, social media posts, and other types of long and short-form content. Check this example of a basic prompt: “Create a captivating social media post to promote our latest sofa collection. Emphasize style, comfort, and versatility. Maximum 30 words.”

- Customer service: Power up chatbots for 24/7 customer support and answering FAQs. Here’s an example of a prompt: “Develop a compelling chatbot script to showcase product features, answer queries, and guide users through the sales process. Limit: 50 words.”
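Prompts like the ones above combine a task, the points to emphasize, and a word limit. Below is a minimal sketch of how such a prompt could be assembled and how a reply could be checked against the limit. The function names `build_prompt` and `within_word_limit` are hypothetical, and the reply is hard-coded rather than fetched from any model:

```python
def build_prompt(task: str, emphasis: list[str], max_words: int) -> str:
    """Assemble a prompt that states the task, the points to emphasize, and a word limit."""
    points = ", ".join(emphasis)
    return f"{task} Emphasize {points}. Maximum {max_words} words."


def within_word_limit(text: str, max_words: int) -> bool:
    """Return True if the text has at most max_words whitespace-separated words."""
    return len(text.split()) <= max_words


prompt = build_prompt(
    "Create a captivating social media post to promote our latest sofa collection.",
    ["style", "comfort", "versatility"],
    max_words=30,
)
print(prompt)

# Hypothetical model reply, hard-coded for illustration.
# In practice it would come from whichever model you call.
reply = (
    "Meet the sofa that does it all: sleek lines, cloud-soft cushions, "
    "and layouts that adapt to any room."
)
print(within_word_limit(reply, 30))
```

Checking the limit in code is useful because models do not always respect length constraints stated in the prompt.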

#2 LLaMA in marketing use cases

Examples of LLaMA use cases in marketing include:

- Efficient language analysis: Analyze large datasets, such as customer feedback or academic datasets, even with limited computational resources. Review this example of a prompt: “Summarize these 50 customer reviews. Focus on sentiment and key themes, and suggest potential improvements.”

- Market research and trends analysis: Analyze consumer trends, social media sentiments, and market research data for actionable insights. Look through this example of a basic prompt: “Conduct trends analysis in video marketing for street food. Seek insights on emerging content formats and audience preferences. Provide 5 recommendations for optimizing video marketing campaigns.”
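When the feedback set is large, it may not fit into a single prompt. One possible approach, sketched below, is to split the reviews into batches and build one summary prompt per batch. The character budget `max_chars` is a hypothetical stand-in for a model's real input limit, and both function names are ours:

```python
def batch_reviews(reviews: list[str], max_chars: int) -> list[list[str]]:
    """Group reviews into batches whose combined length stays within max_chars.

    A single review longer than max_chars still gets a batch of its own.
    """
    batches, current, size = [], [], 0
    for review in reviews:
        # Start a new batch when adding this review would exceed the budget.
        if current and size + len(review) > max_chars:
            batches.append(current)
            current, size = [], 0
        current.append(review)
        size += len(review)
    if current:
        batches.append(current)
    return batches


def summary_prompt(batch: list[str]) -> str:
    """Build a summarization prompt for one batch of numbered reviews."""
    numbered = "\n".join(f"{i}. {text}" for i, text in enumerate(batch, start=1))
    return (
        f"Summarize these {len(batch)} customer reviews. Focus on sentiment "
        f"and key themes, and suggest potential improvements.\n\n{numbered}"
    )


reviews = [
    "Great sofa, very comfortable.",
    "Delivery took three weeks.",
    "Fabric stains easily.",
]
for batch in batch_reviews(reviews, max_chars=60):
    print(summary_prompt(batch))
    print("---")
```

The per-batch summaries can then be combined in a final prompt that asks for an overall summary.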

#3 PaLM in marketing use cases

Ways to use PaLM models in marketing include:

- Advanced query resolution: Address intricate, multistep customer queries that demand an understanding of context and nuance. See the prompt example: “Explain the differences between property type sectors for those with limited knowledge of real estate. Do not use complex terms and definitions.”

- Multi-tasking language applications: Manage assignments that require transitioning between languages or incorporating diverse data types, such as text and numbers. Consider this example of a basic prompt: “Create a report that merges financial statement analysis and reporting notes for an annual business plan.”

Note that all three text generation models can handle every application described above. We matched each use case to the model that fits it best, based on that model's characteristics.

Applications of text generation models in insurance

Let’s delve into several key areas where text generation models in insurance are particularly useful:

#1 GPT in insurance use cases

Here are some of the possible applications of the GPT models in insurance:

- Policy information and FAQs: Provide comprehensive policy details and address FAQs on insurance websites. Here’s a prompt example: “Explain the difference between various car insurance types. Provide insights on coverage variations, benefits, and considerations for types like comprehensive, liability, and collision insurance. Limit: 50 words per type.”

- Claim processing assistance: Support customers during claim processing, offering guidance on necessary steps and documentation. Check a basic prompt example: “Create step-by-step guidance for checking the status of an insurance claim. Provide information on how to access relevant platforms and interpret the status updates.”

#2 LLaMA in insurance use cases

Examples of LLaMA use cases in insurance include:

- Efficient document review: Quickly examine and summarize lengthy insurance documents and policy agreements. Here is an example of a prompt: “Summarize a 100-page accident report for insurance purposes. Emphasize causes and outcomes, and ensure the summary captures the essential information for quick understanding.”

- Customized policy recommendations: Provide personalized insurance policy suggestions derived from customer profiles and historical data. See the next example of a basic prompt: “Provide a home insurance policy recommendation for a family with 2 children.”

#3 PaLM in insurance use cases

Ways to use PaLM models in insurance include:

- Complex query resolution: Manage complex customer queries that require understanding and clarification of intricate insurance terms and conditions. Look at the next prompt example: “Clarify the difference between an actuary and an underwriter. Explain their roles, responsibilities, and how their contributions differ in the insurance industry.”

- Integrated customer service: Deliver extensive customer service by integrating information from various departments like claims processing and sales. Take a look at this example of a basic prompt: “Craft a consolidated reply for a customer asking about premium adjustments.”

Pro tips to use when working with any AI-based model for the best outcomes:

#1. Be specific. Clearly define the context and expected results. Be cautious when asking for statistical data, as Generative AI is prone to producing inaccurate or made-up figures.

#2. Illustrate with examples. Improve the likelihood of success by giving the model reference examples of the output you want.

#3. Use AI responsibly. Automate mundane tasks, but review the output and handle sensitive information yourself.
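The first two tips can be combined in a single prompt that states the context and task and then shows a few worked examples. This is a minimal sketch under our own assumptions: the `few_shot_prompt` helper and its "Input/Output" layout are illustrative and not tied to any particular model:

```python
def few_shot_prompt(
    context: str,
    task: str,
    examples: list[tuple[str, str]],
    query: str,
) -> str:
    """Combine context, task, worked examples, and the new input into one prompt."""
    parts = [f"Context: {context}", f"Task: {task}", ""]
    for sample_input, sample_output in examples:
        parts.append(f"Input: {sample_input}")
        parts.append(f"Output: {sample_output}")
        parts.append("")
    # The final input is left without an output so the model completes it.
    parts.append(f"Input: {query}")
    parts.append("Output:")
    return "\n".join(parts)


prompt = few_shot_prompt(
    context="You answer customer questions for a car insurance provider.",
    task="Explain each insurance term in one plain-language sentence.",
    examples=[
        ("liability insurance", "Covers damage or injury you cause to other people."),
        ("collision insurance", "Covers damage to your own car after a crash."),
    ],
    query="comprehensive insurance",
)
print(prompt)
```

Whatever the model returns should still be reviewed by a person before it reaches customers, in line with the third tip.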

Final take

The integration of text generation models like GPT, LLaMA, and PaLM in business represents a significant leap in operational efficiency and customer engagement. AI-powered solutions have various use cases, from content creation to customer service to risk assessment to customized policy development.

Automate your tasks with LLMs, but do it right: choose the model based on its characteristics and your goals, and master prompt writing for better results.

Author Bio:

With a strong background in data science and software engineering, Oleksandr Stefanovskyi heads the Intelliarts machine learning team. He gravitates toward data-heavy projects and follows the principles of the CRISP-DM methodology.