Google Operations

New observability features for your Splunk Dataflow streaming pipelines

We’re thrilled to announce several new observability features for the Pub/Sub to Splunk Dataflow template to help operators keep tabs on the performance of their streaming pipelines. Splunk Enterprise and Splunk Cloud customers use the Splunk Dataflow template to reliably export Google Cloud logs for in-depth analytics in security, IT, or business use cases.

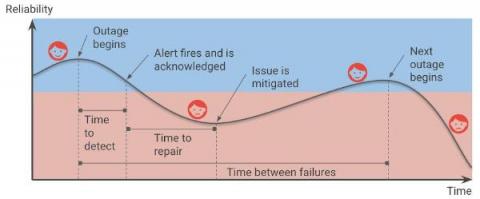

Are your SLOs realistic? How to analyze your risks like an SRE

Setting up Service Level Objectives (SLOs) is one of the foundational tasks of Site Reliability Engineering (SRE) practice, giving the SRE team a target against which to evaluate whether a service is running reliably enough. The complement of your SLO (100% minus the target) is your error budget: how much unreliability you are willing to tolerate.
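To make the SLO/error-budget relationship concrete, here is a minimal Python sketch. It assumes a time-based SLO expressed as a fraction (0.999 for 99.9%) and a 30-day measurement window; both are illustrative choices, not values taken from the article, and `error_budget` is a hypothetical helper name.

```python
from datetime import timedelta


def error_budget(slo: float, window: timedelta) -> timedelta:
    """Return how much unreliability an SLO tolerates over a window.

    slo is a fraction strictly between 0 and 1 (e.g. 0.999 for "99.9%").
    The budget is the complement (1 - slo) applied to the window length.
    """
    if not 0.0 < slo < 1.0:
        raise ValueError("slo must be a fraction strictly between 0 and 1")
    return window * (1.0 - slo)


# Illustrative numbers: a 99.9% SLO over a 30-day window.
budget = error_budget(0.999, timedelta(days=30))
print(budget)  # 0:43:12, i.e. 43.2 minutes of tolerated unreliability
```

Tightening the SLO shrinks the budget proportionally: moving from 99.9% to 99.99% over the same window leaves only about 4.3 minutes.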

Utilizing IT Operations to Drive Informed Business Decisions with Broadcom Software

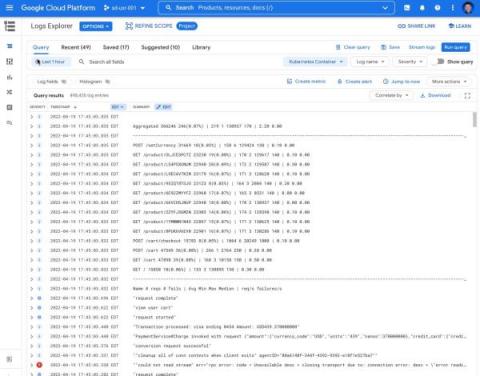

Announcing new simple query options in Cloud Logging

When you’re troubleshooting an issue, finding the root cause often involves finding specific logs generated by infrastructure and application code. The faster you can find logs, the faster you can confirm or refute your hypothesis about the root cause and resolve the issue! Today, we’re pleased to announce a dramatically simpler way to find logs in Logs Explorer.

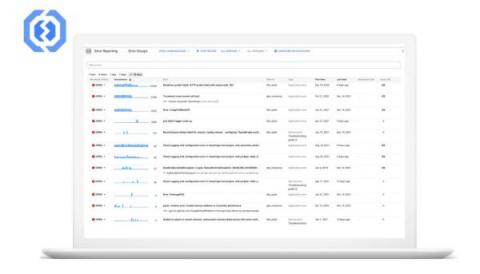

Deliver exception messages through Slack and Webhooks for fast resolution

Building new applications is a lot of fun, but troubleshooting and fixing the crashes that come with app development is not. While many organizations are rapidly adopting the DevOps model, some still use legacy structures in which development and operations teams are separate: developers build apps and hand them to the ops team, who in turn deploy and maintain the production stack. A common problem with this workflow is how long it takes to find and resolve crashes.
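The underlying mechanism is simply pushing an exception summary to an HTTP endpoint. As a generic sketch (not the product's own notification channel), the Python below formats an exception and POSTs it as JSON, assuming Slack's incoming-webhook format, which accepts a JSON body with a `text` field. `WEBHOOK_URL`, `build_exception_payload`, `notify`, and the service name are hypothetical placeholders.

```python
import json
import urllib.request

# Hypothetical placeholder; a real incoming-webhook URL is issued by Slack.
WEBHOOK_URL = "https://hooks.slack.com/services/EXAMPLE/EXAMPLE/EXAMPLE"


def build_exception_payload(service: str, exc: BaseException) -> dict:
    """Format an exception as a Slack incoming-webhook message body."""
    return {"text": f"[{service}] {type(exc).__name__}: {exc}"}


def notify(payload: dict, url: str = WEBHOOK_URL) -> int:
    """POST the payload as JSON and return the HTTP status code."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.status


if __name__ == "__main__":
    try:
        1 / 0
    except ZeroDivisionError as err:
        payload = build_exception_payload("checkout-service", err)
        print(json.dumps(payload))
        # notify(payload)  # uncomment once WEBHOOK_URL points at a real webhook
```

Keeping payload construction separate from the network call makes the formatting testable without sending anything.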

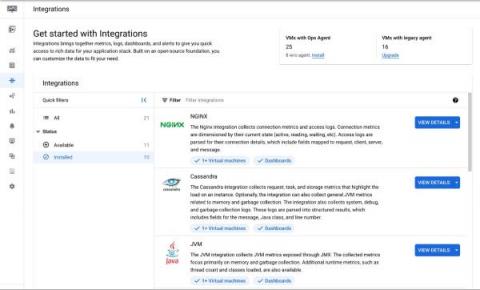

Application observability made easier for Compute Engine

When IT operators and architects begin their journey with Google Cloud, Day 0 observability needs tend to focus on infrastructure and aim to address questions about resource needs, a plan for scaling, and similar considerations. During this phase, developers and DevOps engineers also make a plan for how to get deep observability into the performance of third-party and open-source applications running on their Compute Engine VMs.

How to set up Prometheus monitoring for your services

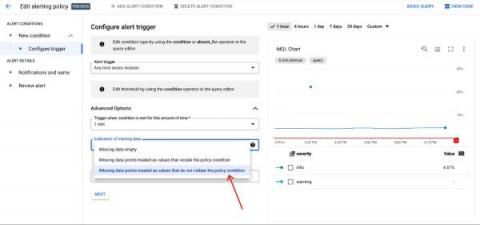

Add severity levels to your alert policies in Cloud Monitoring

When you are dealing with a situation that fires a bevy of alerts, do you instinctively know which ones are most pressing? Severity levels are an important alerting concept that helps you and your team assess which notifications to prioritize. You can use these levels to focus on the issues most critical to your operations and cut through the noise.
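As a minimal sketch of severity-based triage, the Python below orders incoming alerts most-urgent first. It assumes a three-level scheme (Critical, Error, Warning); the `Alert` type, `triage` function, and policy names are hypothetical illustrations, not the Cloud Monitoring API.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Lower value = more urgent; the three-level scheme is an assumption.
    CRITICAL = 0
    ERROR = 1
    WARNING = 2


@dataclass
class Alert:
    policy: str
    severity: Severity


def triage(alerts: list[Alert]) -> list[Alert]:
    """Return alerts ordered most-urgent first; ties keep arrival order."""
    return sorted(alerts, key=lambda a: a.severity)


incoming = [
    Alert("disk-usage-high", Severity.WARNING),
    Alert("checkout-5xx-rate", Severity.CRITICAL),
    Alert("queue-backlog", Severity.ERROR),
]
for alert in triage(incoming):
    print(alert.severity.name, alert.policy)
```

Because Python's `sorted` is stable, alerts of equal severity stay in the order they arrived.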

Get more insights from your Java applications logs

Today it is even easier to capture logs in your Java applications. A new version of the Cloud Logging client library for Java lets developers get more data with their application logs: the library implicitly populates every ingested log entry with the current execution context. Read on to learn how to get HTTP request information, tracing information, and additional metadata into your logs without writing a single line of code.
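The Java library's own API is not reproduced here. To illustrate the general idea of implicit context enrichment, this sketch uses Python's standard `logging` and `contextvars` modules instead: a trace ID is set once per request, and a filter attaches it to every log record without changing any call site. `current_trace_id`, `TraceContextFilter`, `handle_request`, and the logger name are hypothetical.

```python
import contextvars
import logging

# Hypothetical request-scoped context; a web framework would set this per request.
current_trace_id = contextvars.ContextVar("current_trace_id", default="-")


class TraceContextFilter(logging.Filter):
    """Attach the current trace ID to every record without touching call sites."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.trace_id = current_trace_id.get()
        return True  # never drop records; only enrich them


logger = logging.getLogger("shop")
handler = logging.StreamHandler()
handler.setFormatter(logging.Formatter("%(levelname)s trace=%(trace_id)s %(message)s"))
handler.addFilter(TraceContextFilter())
logger.addHandler(handler)
logger.setLevel(logging.INFO)


def handle_request(trace_id: str) -> None:
    token = current_trace_id.set(trace_id)
    try:
        logger.info("processing order")  # no trace ID passed explicitly
    finally:
        current_trace_id.reset(token)  # restore the previous context


handle_request("4bf92f3577b34da6a3ce929d0e0e4736")
```

Using a `ContextVar` rather than a global keeps the value isolated per thread and per asyncio task.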